Minsky is a system dynamics program with a unique feature I invented that makes it easy to model monetary dynamics. Called a “Godley Table”, this lets you model financial flows using the accountant’s tool of double-entry bookkeeping.

Minsky is Open Source–which means (a) that it is free and (b) that both the program and its source code are freely available. Minsky has been programmed in C++ and Javascript. Its chief programmer is my friend and colleague Dr Russell Standish. In the Open Source tradition, we would welcome assistance from other programmers.

Minsky’s project pages are https://sourceforge.net/projects/minsky/ at SourceForge and https://github.com/highperformancecoder/minsky at Github.

This is the Latest version: SourceForge will work out your operating system (PC, Mac or Linux) and download the appropriate one. Sourcecode and beta versions are available at https://sourceforge.net/projects/minsky/files/.

A quick introduction to Minsky

This 40-page booklet gives a very fast overview of Minsky, and should be enough to get you going as a Minsky user:

Modelling with Minsky

This free book is a companion to The New Economics: A Manifesto. It explains how to use Minsky, how to build all the models in that book, and how you can use Minsky to build your own models. The current version, published on March 3rd 2022, is fairly complete, but there are deficiencies which I will attempt to address in further versions when time permits.

To download it, click on the “Download” link below rather than the hyperlink: the latter takes forever to load!

Minsky Models

These are the models used in The New Economics: A Manifesto.

This ZIP file contains the files used to generate the following figures in the book:

| Figure | Page | Description | File |
| --- | --- | --- | --- |
| Fig2-1 | 29 | Money enables the butter maker to buy a gun without the gun maker having to want butter | GoldAsMoney.mky |
| Fig2-2 | 32 | The State as the conduit for fiat money transfers where money is the State’s liability and physical gold its asset | GoldCertificatesAsMoneyGoldBacked.mky |

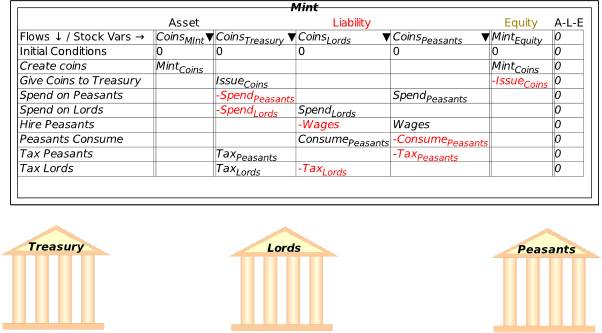

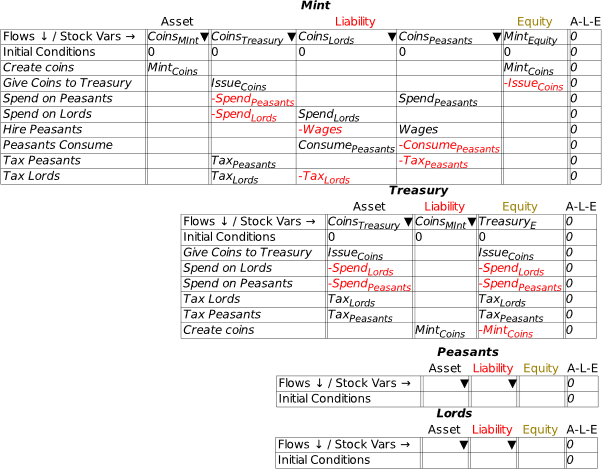

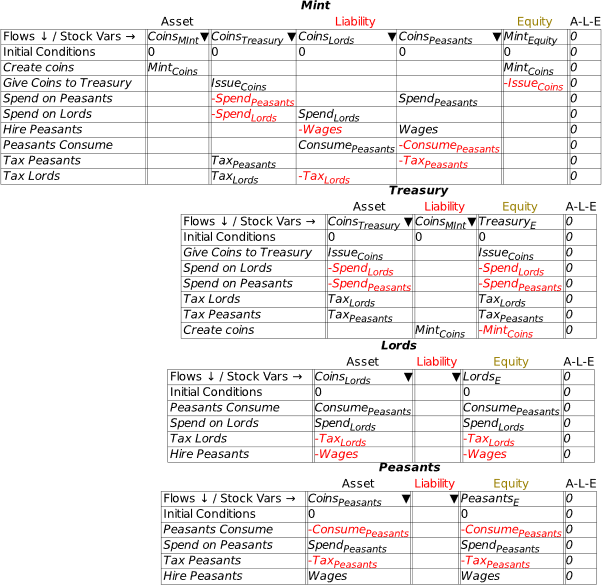

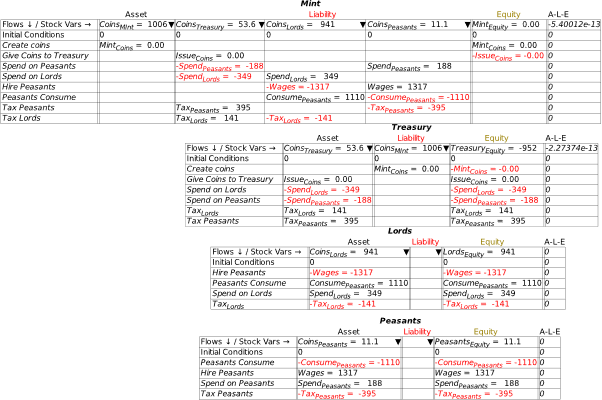

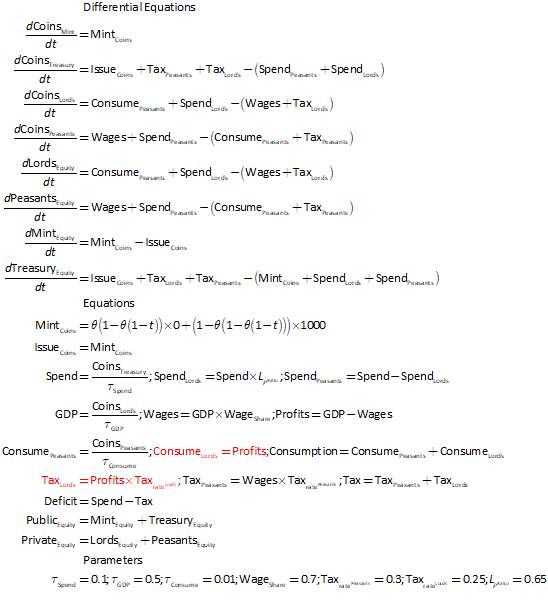

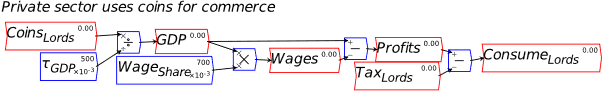

| Fig2-3 | 36 | Modelling the initiation of a monetary economy in Minsky–Godley Tables | DesanOffaInitiationOfFiatMoney.mky |

| Fig2-4 | 38 | Modelling the initiation of a monetary economy in Minsky–Plots | DesanOffaInitiationOfFiatMoney.mky |

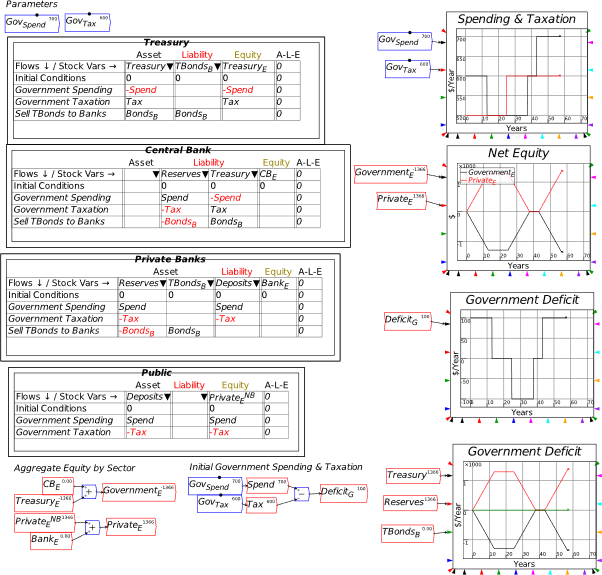

| Fig2-5 | 41 | The fundamental monetary operations of the government | ModernFiatMoney.mky |

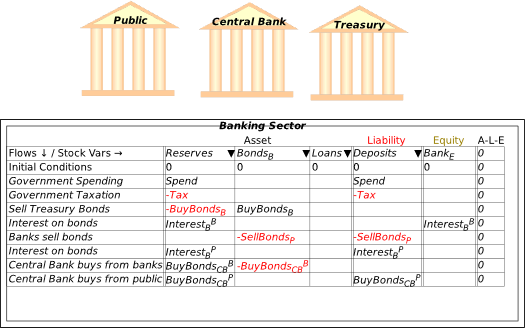

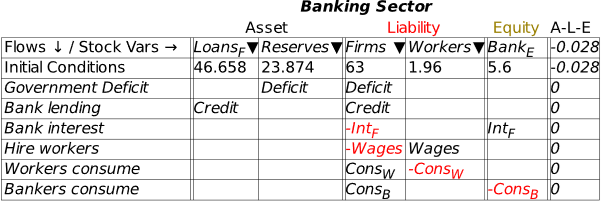

| Fig2-10 | 61 | The banking sector’s view of a mixed fiat-credit economy–Godley Table | SurplusCausesDepression.mky |

| Fig2-11 | 62 | An integrated view of government deficits and private sector credit | SurplusCausesDepression.mky |

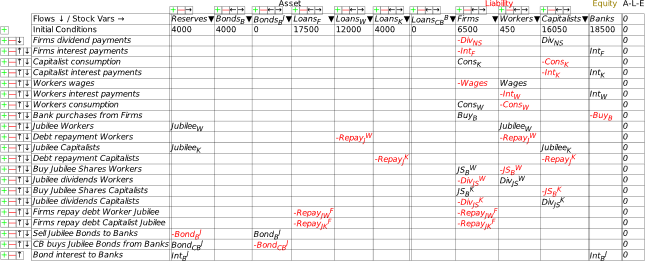

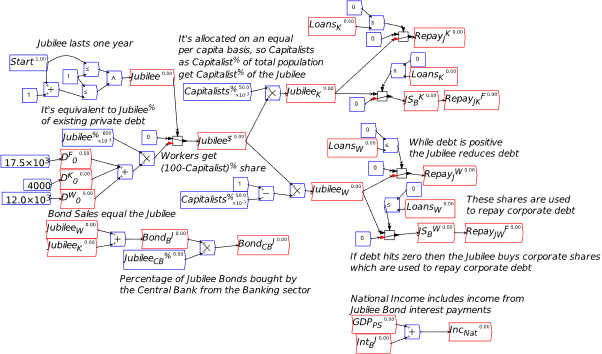

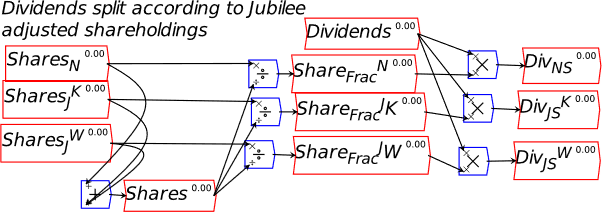

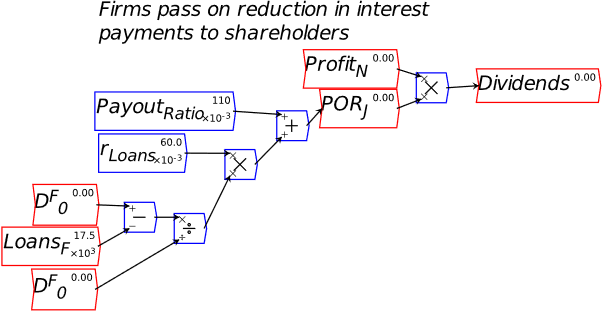

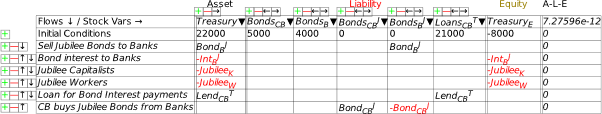

| Fig2-13 | 67 | Accounting for a Modern Debt Jubilee | BasicModernDebtJubilee.mky |

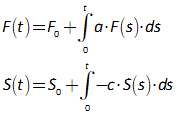

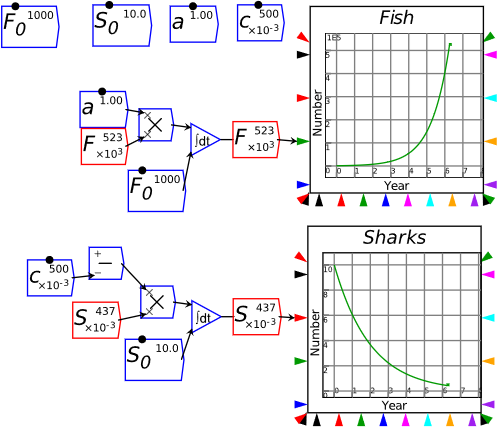

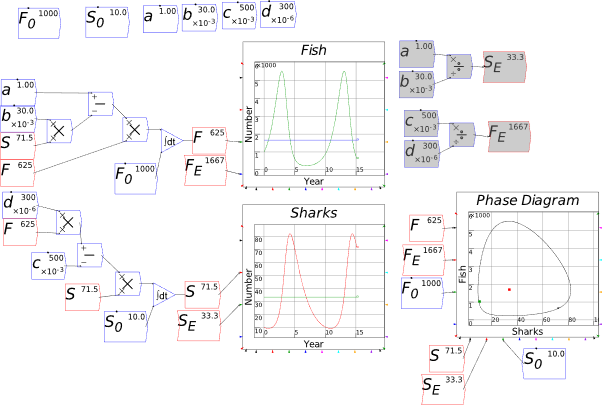

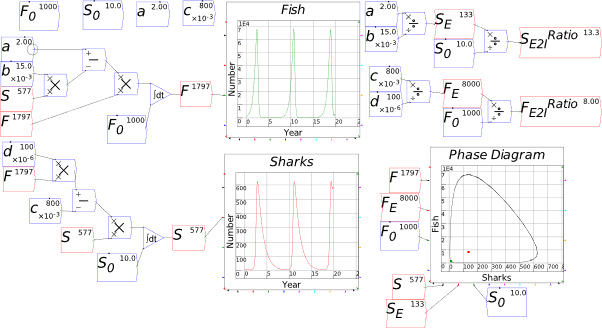

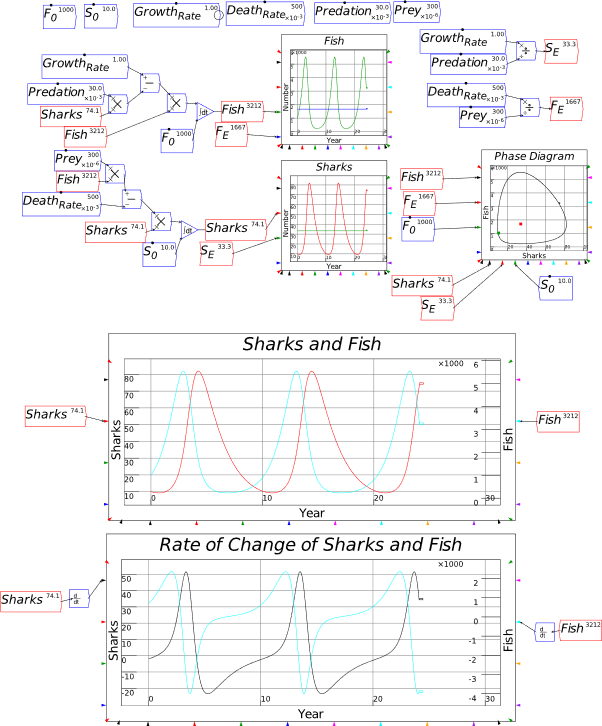

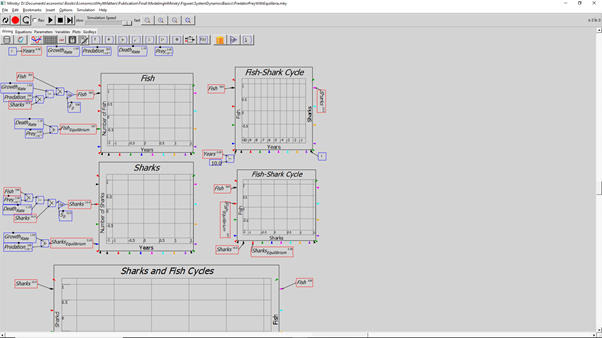

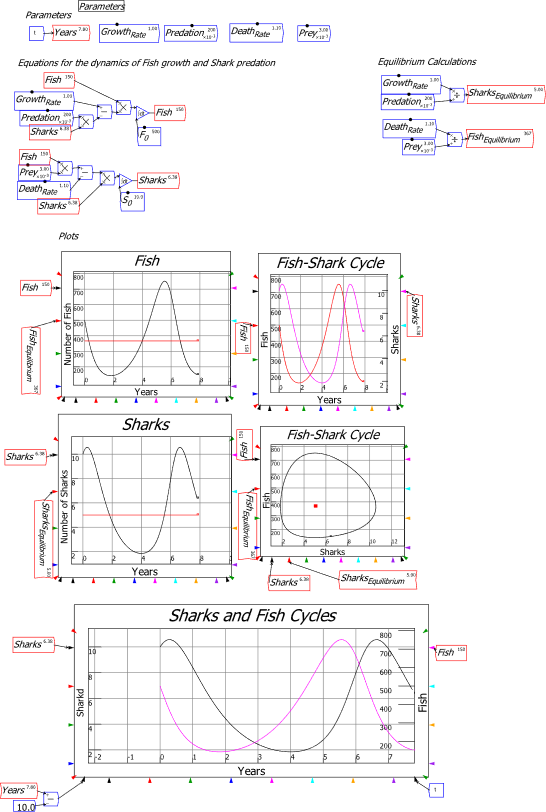

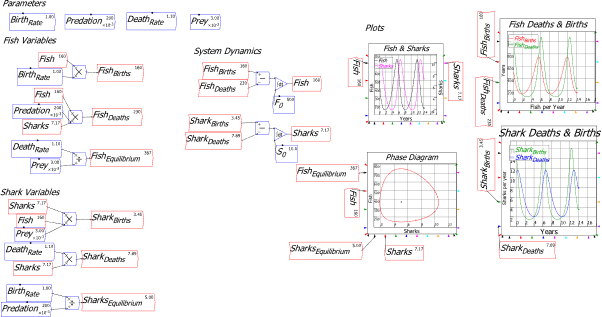

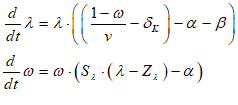

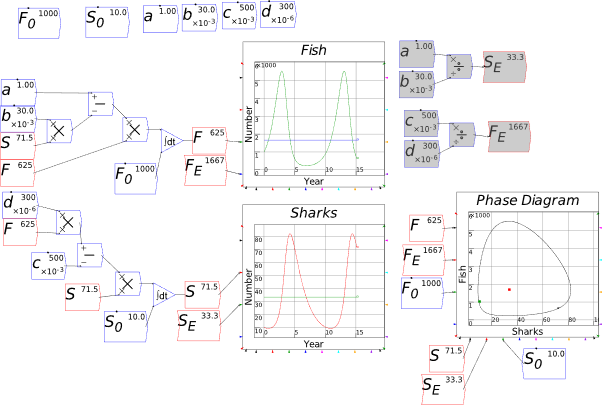

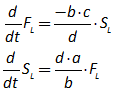

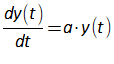

| Fig3-2 | 78 | A predator-prey model in Minsky, using sharks and fish | PredatorPrey.mky |

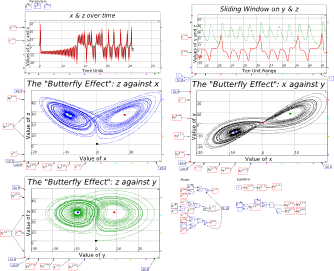

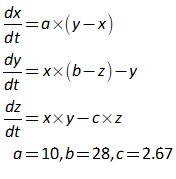

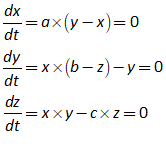

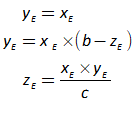

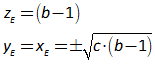

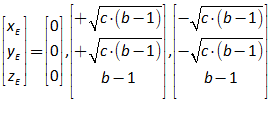

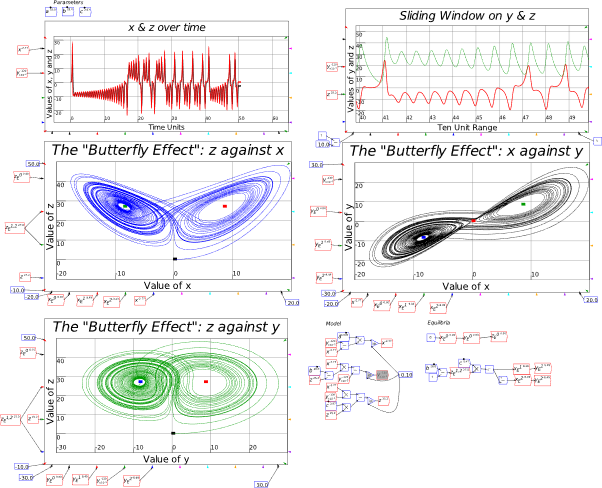

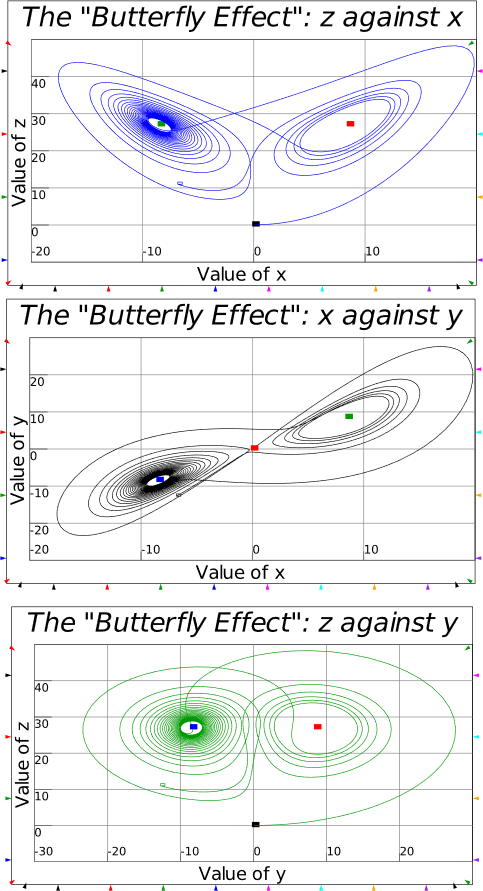

| Fig3-3 | 80 | Lorenz’s model of aperiodic cycles in the weather | Lorenz.mky |

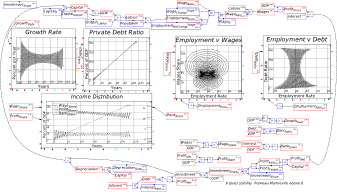

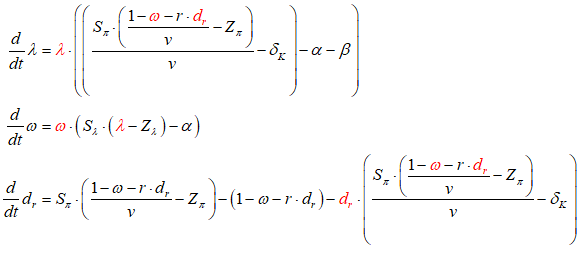

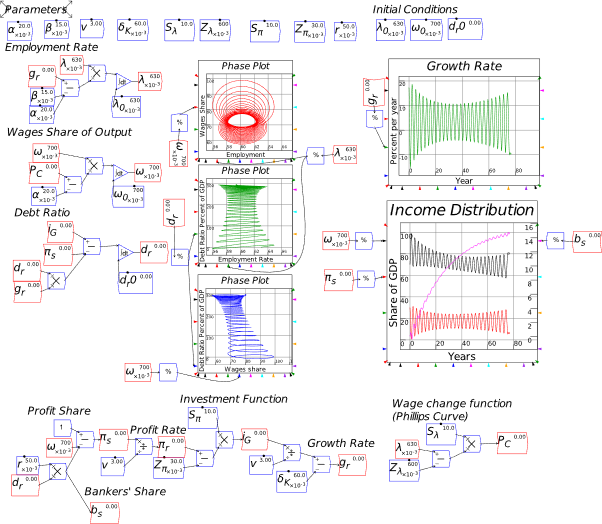

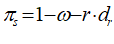

| Fig3-4 | 86 | The Keen-Minsky model and the ‘intermittent route to chaos’ | KeenMinskyModel.mky |

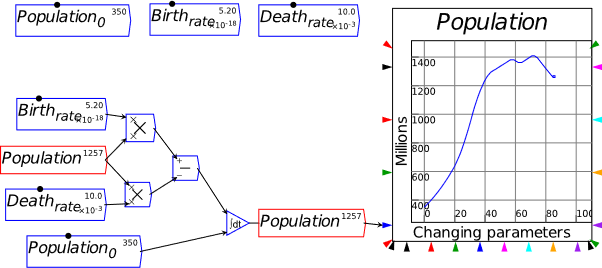

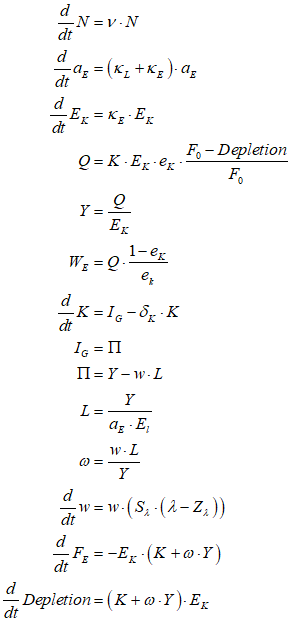

| Fig4-3 | 108 | A simple energy-based model with resource depletion and waste production | GoodwinResourceDepletion.mky |

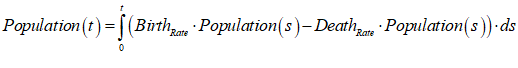

| Fig6-1 | 147 | Simple population growth as an integral equation in Minsky | PopulationGrowth.mky |

| | | Pedro Pratas’ model of the Portuguese economy | Portugal.mky |

The free online companion to The New Economics: A Manifesto

Third Release: March 3rd 2022

© Steve Keen

Table of Contents

2 Introduction: A Manual with Attitude 6

4 Understanding money: “Minsky for Dummies” 13

4.4 A significant extension: Nonfinancial Assets 34

4.4.1 Ab initio creation of banks 35

4.4.2 Financial Assets and Bubbles in Nonfinancial Asset valuation 37

5.2 Multiple copies of variables and parameters 49

5.2.1 A Keen Rant: Rehabilitating Bill Phillips 49

5.4 Building a “Phillips Curve” in Minsky 55

6.2.2 Using intermediate variables 72

6.3.1 The Equations, Parameters, Variables, Plots and Godleys Tabs 74

6.4 Exporting and importing a model 75

6.5 A Keen Rant: How not to handle time 76

6.7 Integrals versus differentials 80

6.8 A first model, done two ways 80

7.1 Creating a Godley Table 91

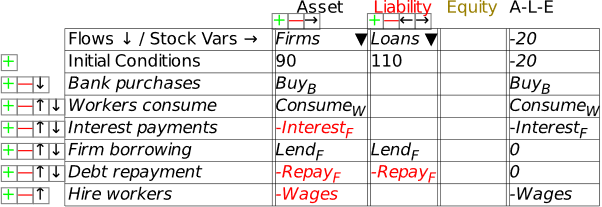

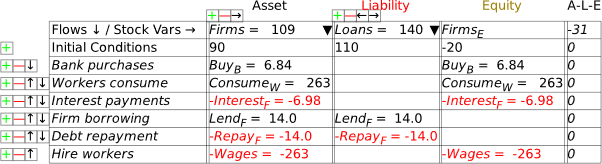

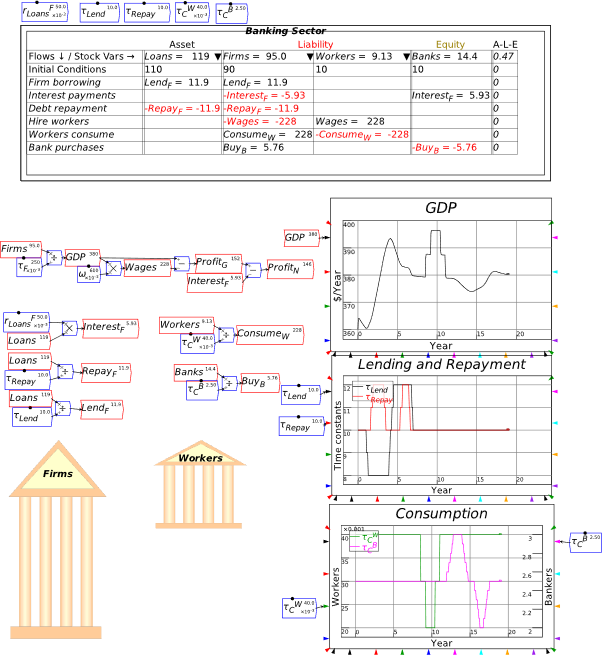

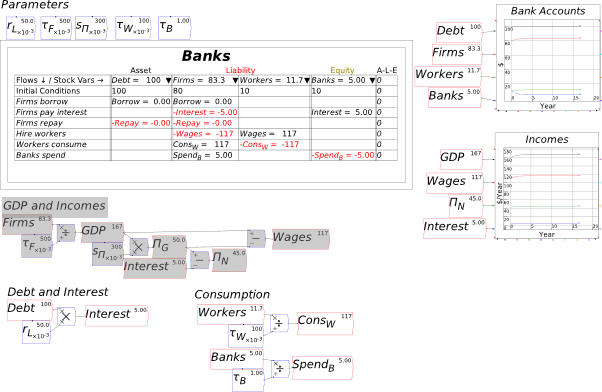

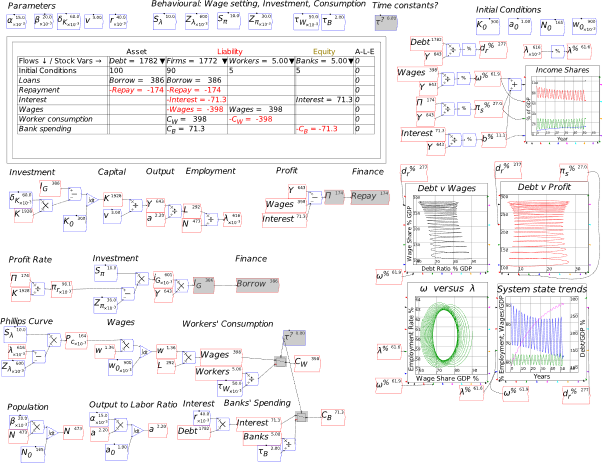

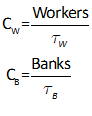

7.2 The simplest possible monetary model of a pure credit economy 92

7.3 Defining the flow elements of a Godley Table 95

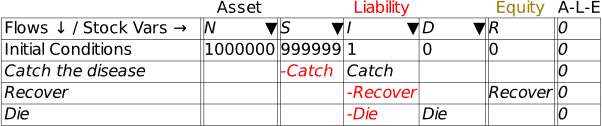

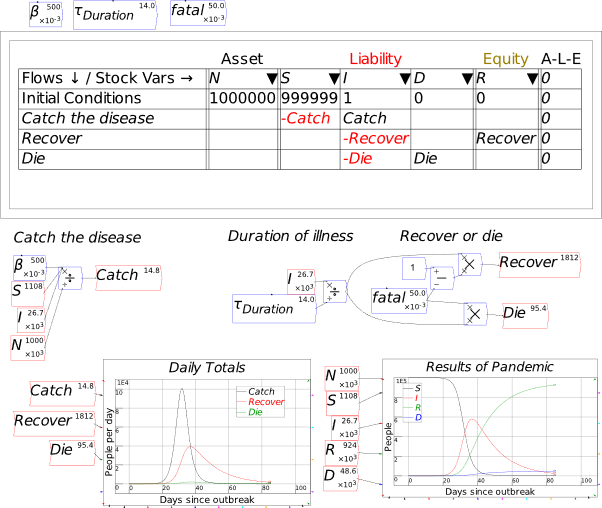

7.4 Getting creative with Godley: Modelling the pandemic 107

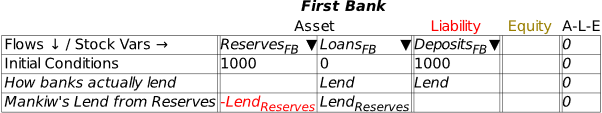

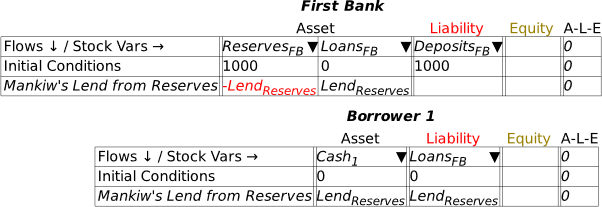

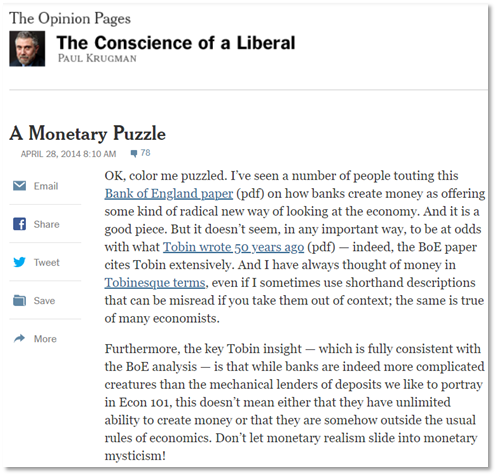

7.5 A Keen Rant: Using Minsky to Revisit the Keen-Krugman Debate 111

7.6 A Mixed Godley Table-Flowchart model 125

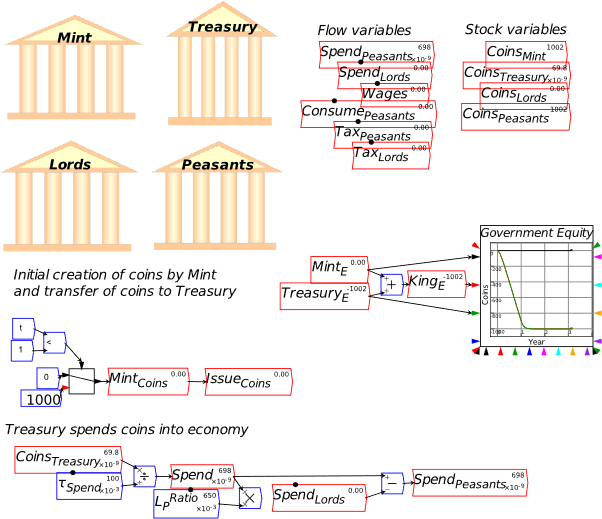

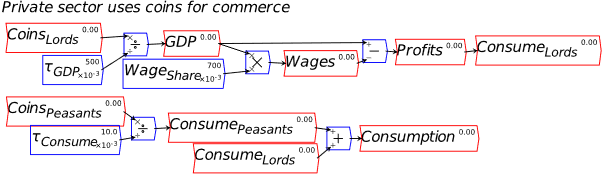

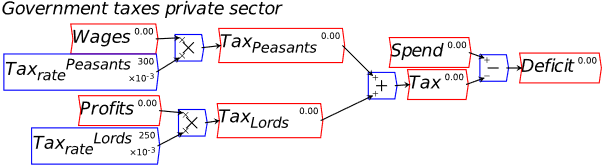

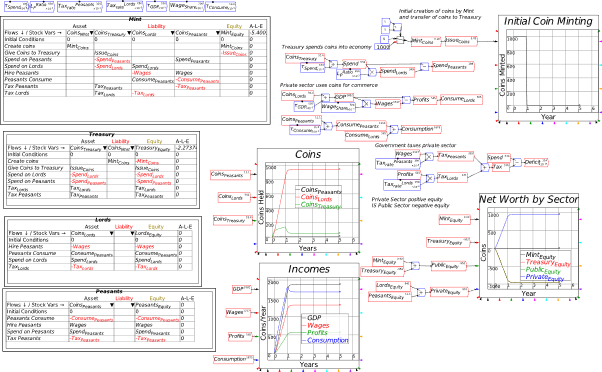

8.1 Modelling the Origins of Fiat Money in Minsky: pp. 33-39 of Manifesto 130

8.2 Modelling Modern Fiat Money in Minsky: pp. 39-50 of Manifesto 144

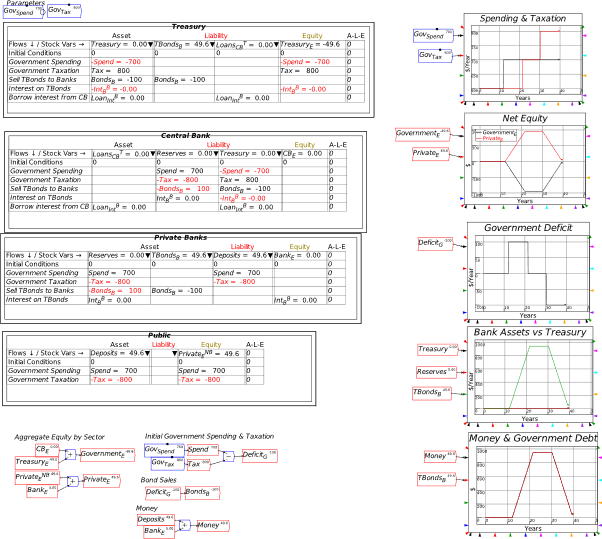

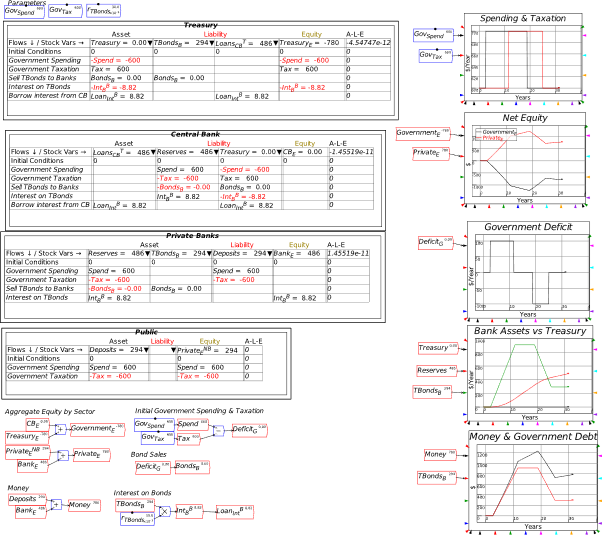

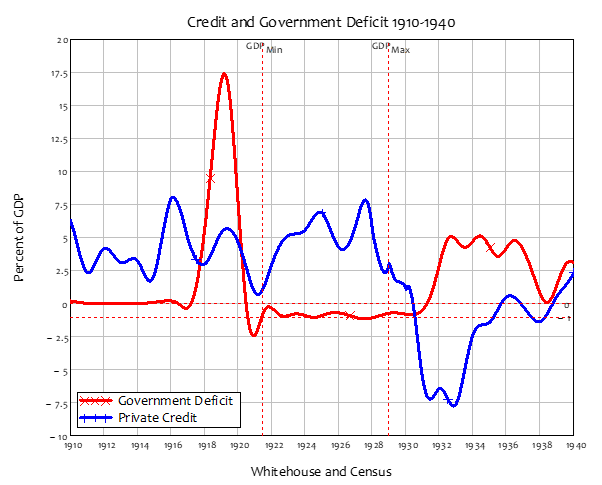

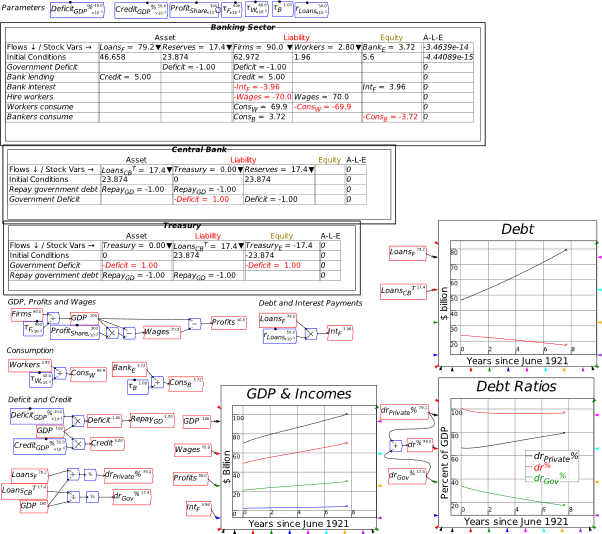

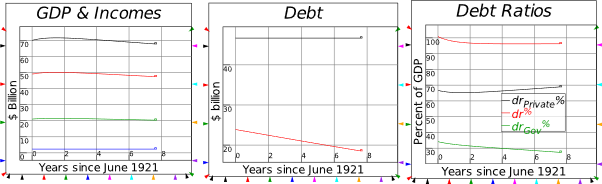

8.3 An integrated view of deficits and credit: pp. 59-65 of Manifesto 151

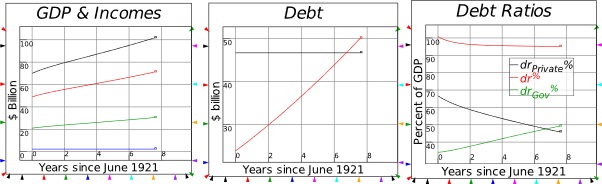

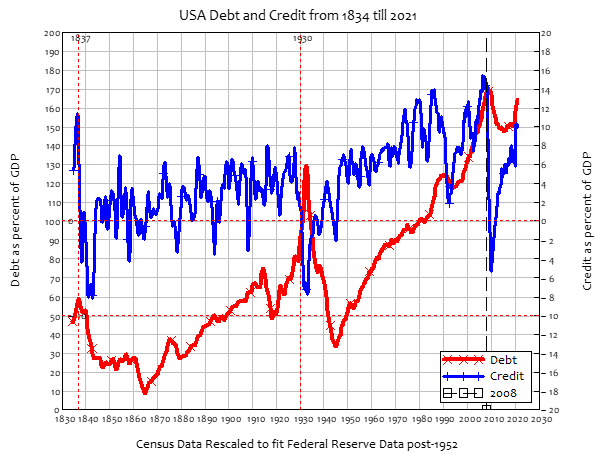

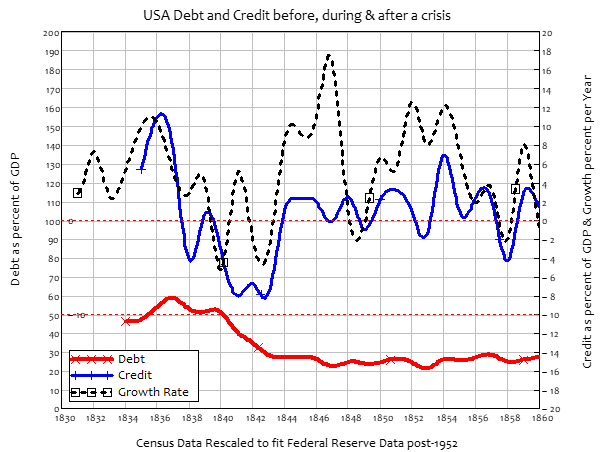

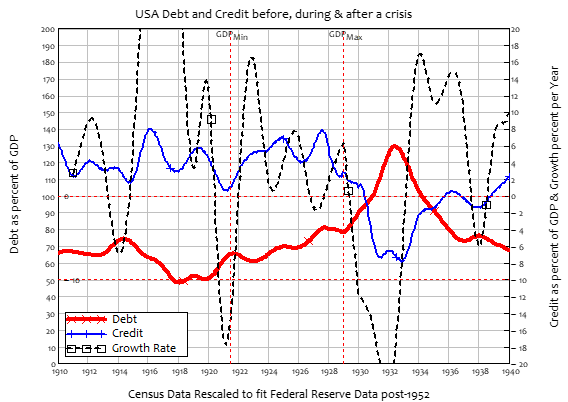

8.3.1 The actual event: Coolidge Surplus and private sector credit binge 161

8.3.2 Coolidge Surplus with no private sector borrowing 162

8.3.3 Coolidge Balanced Budget with the credit binge 162

8.3.4 Coolidge runs a Deficit with the credit binge 162

8.3.5 Coolidge runs a Deficit with no credit 163

8.3.6 Coolidge runs a Deficit to reproduce the 1920s boom without credit 163

8.3.7 Disentangling cause and effect 163

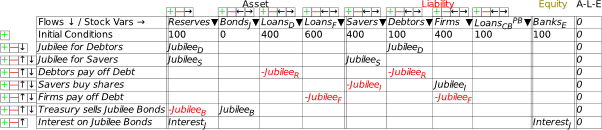

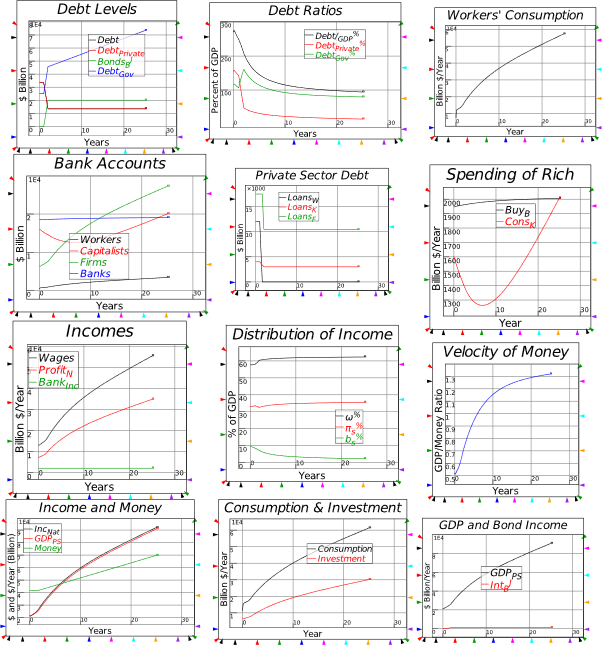

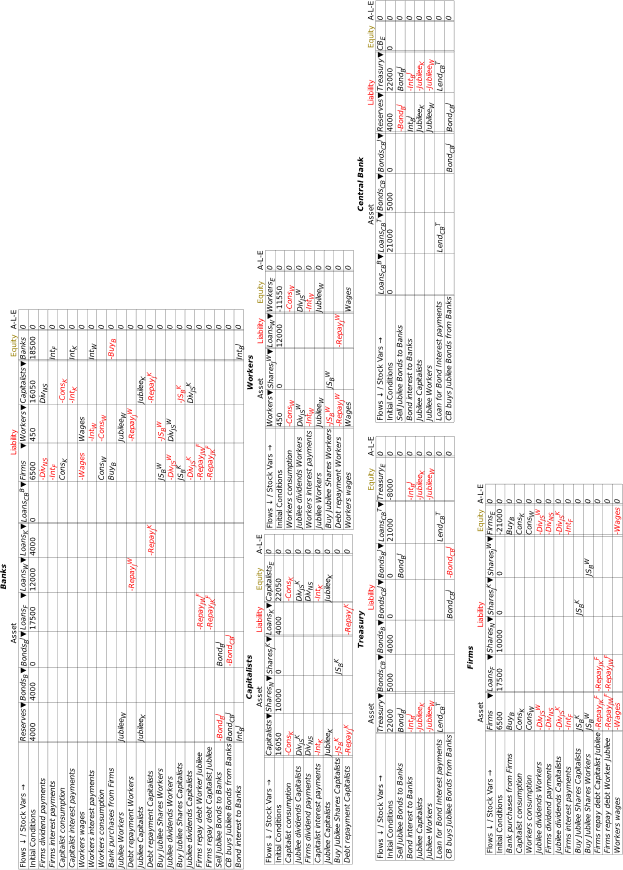

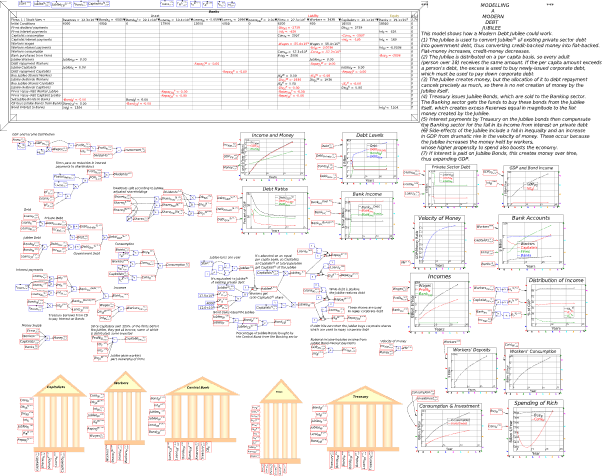

8.4 A Modern Debt Jubilee: pp. 65-68 of Manifesto 167

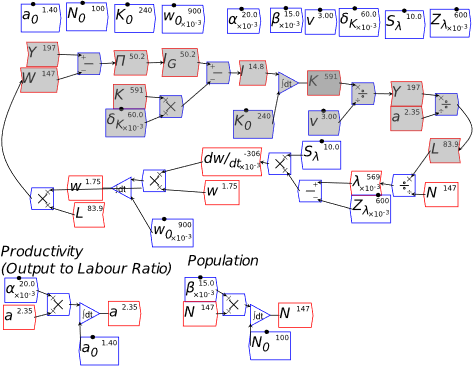

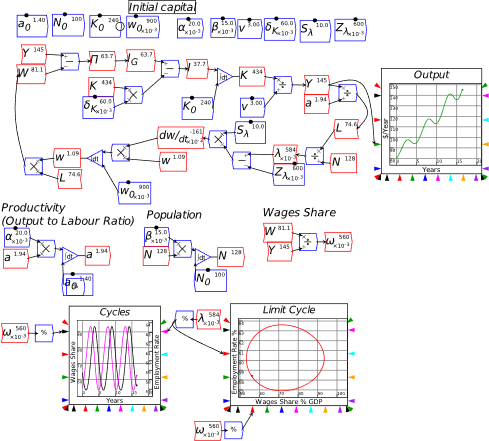

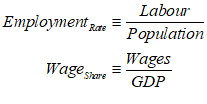

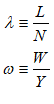

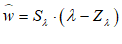

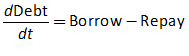

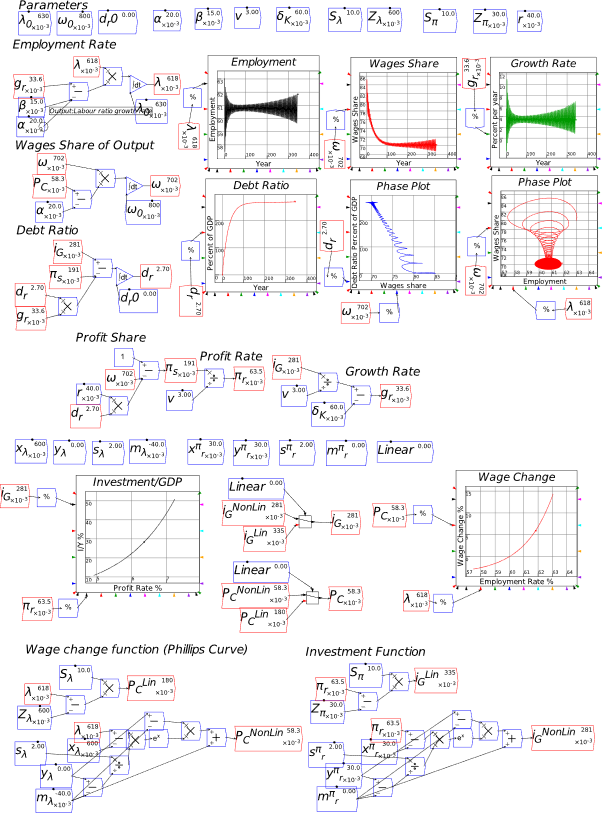

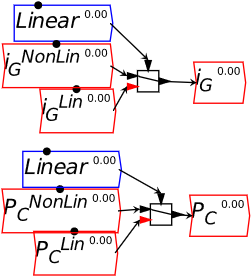

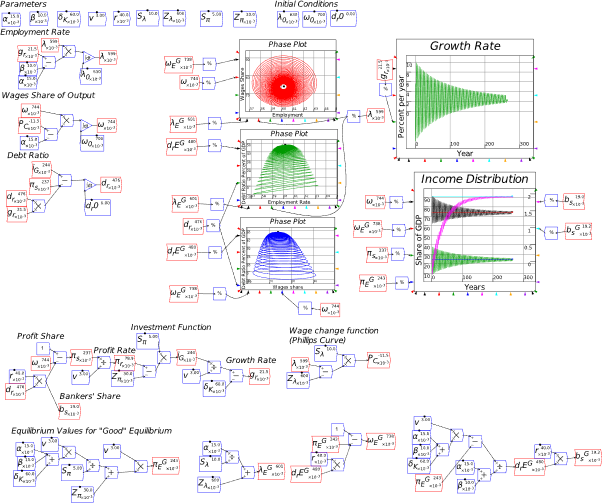

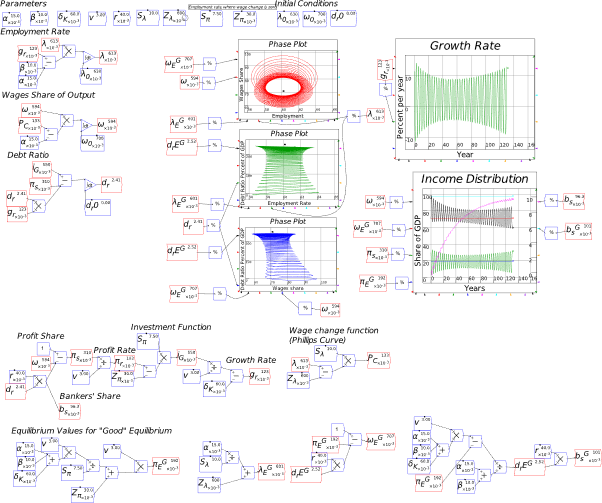

9.2 A complex systems model of economic instability 183

9.3 Nonlinear Functions in Nonlinear Models 189

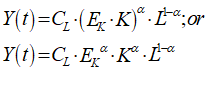

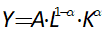

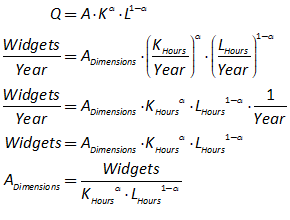

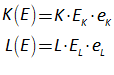

10.1 Forget the “Cobb-Douglas Production Function” (an optional read) 196

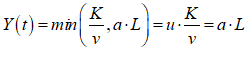

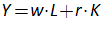

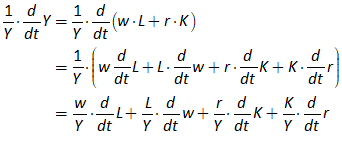

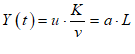

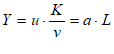

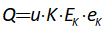

10.2 Generalizing the Leontief Production Function 199

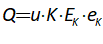

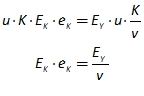

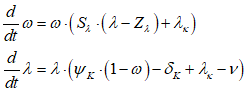

10.3 A Goodwin model with Energy 206

10.4 A Goodwin model with matter and energy 214

10.5 Derivation: constant technology and population 217

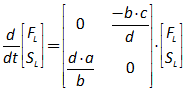

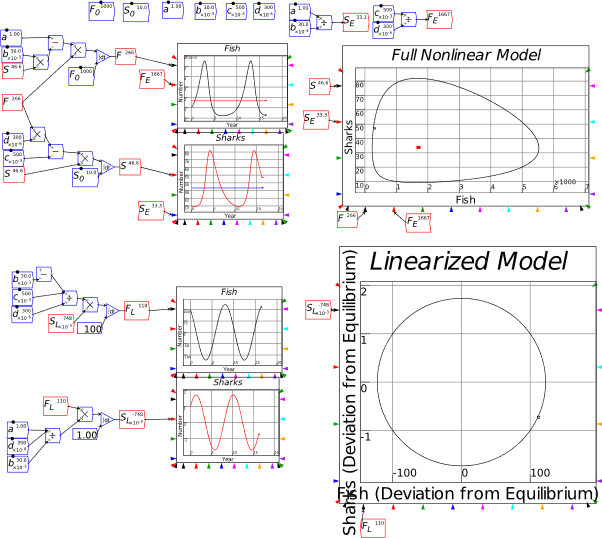

11.1 Of linearity and nonlinearity 226

11.2 The absolute basics of stability analysis 228

11.3 Analyzing a complex model 234

11.4 Analyzing the Keen model of Minsky’s Financial Instability Hypothesis 240

12 Model fitting notes from a non-statistician 251

14 Appendix: the credit dynamics of house prices 255

- Preface

I closed The New Economics: A Manifesto (Keen 2021) with an observation on the following remark by John Blatt in his brilliant book Dynamic Economic Systems (Blatt 1983):

At present, the state of our dynamic economics is more akin to a crawl than to a walk, to say nothing of a run. Indeed, some may think that capitalism as a social system may disappear before its dynamics are understood by economists. (Blatt 1983, pp. 4–5, emphasis added)

I noted that, when I first read this in 1991, “I thought it was a good piece of hyperbole. I now regard it as a depressingly prescient prediction”, because:

Given the role that Neoclassical economists have played in humanity making only trivial responses to the challenge of climate change to date, the social system that gets us through that challenge—if we do get through it—will be far more a command than a market economy. (Keen 2021, p. 204)

There is therefore a bitter irony in me releasing a book on dynamic modelling in economics that I hope would meet Blatt’s standards, right at the time that I expect his bleak prediction to start coming true. The forces humanity has unleashed, by ignoring the damage its production systems have done to the biosphere, will transform that biosphere into something almost certainly inimical to the survival of those production systems, and certainly inimical to their management by the disaggregated market system that is the hallmark of capitalism. Instead, if human civilization survives, it will be because of a centralized, coordinated command system, whose only antecedent is the War Economy of WWII.

What then is the point of showing how to do dynamic modelling of a monetary production economy properly, when I expect that system to fail sometime in the next two decades, and probably sooner rather than later?

I see two immediate reasons. One is that, having failed to use systems thinking as the ecological crisis unfolded, we are going to need the capacity to think in a systemic way to manage our attempts to cope with the crisis. The second is that, unless an alternative paradigm develops in economics, the old ways of thought—the Neoclassical ways—will keep disturbing our thinking, even as we attempt to cope with the myriad crises which that way of thinking caused.

Minsky is far from complete: there are many ways in which I would extend the program, had I the funding to support it. But as it stands, it is capable of doing far more sophisticated modelling than is the norm in economics, and of supporting a way of thinking about the economy and the planet’s ecology that could have prevented us experiencing this crisis in the first place. I hope that this book will enable you to use not just Minsky, but also the integrated, systems way of thinking about the economy that should always have been the foundation of economics.

This manual is also incomplete: it lacks a chapter on fitting models to data, but with The New Economics: A Manifesto now published in the USA as well as the UK, I need to have this manual available immediately for those who wish to learn how to model in Minsky.

I will periodically post updates of this book to https://www.patreon.com/ProfSteveKeen and http://www.profstevekeen.com/minsky/.

- Introduction: A Manual with Attitude

Technically, this book is a free companion to The New Economics: A Manifesto (Keen 2021)—hereinafter referred to as Manifesto—with two main functions:

- To explain the Minsky models in Manifesto; and

- To be a manual for the Open Source system dynamics program Minsky, which features prominently in Manifesto.

In practice, it has developed a 3rd function: it’s somewhere for me to rant about issues that I didn’t get to cover in Manifesto. This was partly for reasons of lack of space: the publisher’s guidelines limited the book to just 25,000 words. But it’s mainly because the audience for Manifesto was very broad, whereas the intended audience for this book is very narrow: I want to reach young economists who wish to construct realistic dynamic models of capitalism.

The main rants are:

- That Bill Phillips (of the Phillips Curve) was a far greater economist—and person—than the mainstream economics caricature of the Phillips Curve has made him out to be;

- That economists should stop modelling in “discrete time” or periods, and instead should model in continuous time, using differential equations; and

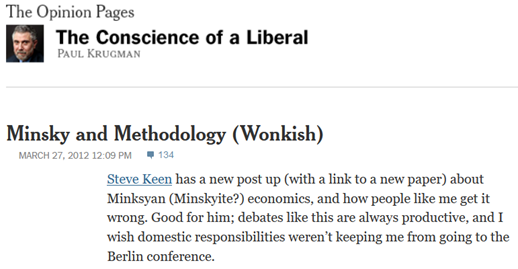

- That “Loanable Funds”, Paul Krugman’s preferred model of banking, is utterly misleading about the role of banks, debt and money in macroeconomics.

There’d be a lot less text to read if I treated this as a standard “how to” manual for Minsky itself, which is what I would do if Minsky were just another, better way to do what you—an established or nascent economic modeler—are currently doing with different tools. But that’s not the case, even for Post Keynesian economists who are currently working in the Godley and Lavoie stock-flow consistent tradition. For those who’ve only been exposed to or developed Neoclassical models, whether that’s the old-fashioned IS-LM and AS-AD models, or the currently dominant practice of DSGE modelling, Minsky does not and indeed cannot do what you currently do—and I want to persuade you that this is a good thing.

Minsky is a system dynamics program. It works only in continuous time—not “periods”: see section 6.2 (starting on page 67) for the rant about why Minsky does not use “periods”—and it is designed to model systems that operate far-from-equilibrium, rather than systems that are assumed to return to their equilibrium values after an exogenous shock. Its unique feature, the “Godley Table”, enables the correct modelling of monetary dynamics, which are absent from mainstream economic models. Each of those facets of the program, and several others, reflect the needs of an approach to modelling that I believe is superior to the dominant methods in both Post Keynesian and Neoclassical economic modelling.

The key foundation here is its use of system dynamics. “System” means an interacting set of factors that cannot be understood in isolation from one another. This puts it in the category that Neoclassicals call “general” as opposed to “partial”. But for Neoclassicals, “general” goes hand in hand with “equilibrium”, whereas the second word in the phrase “system dynamics” transcends “equilibrium”. An equilibrium is a state that a system can be in, but, in all likelihood, the interacting forces in a system will be such that its equilibria are unstable, so that they describe conditions that the system will never display.
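To make the idea of an unstable equilibrium concrete, here is a minimal sketch in plain Python (not Minsky) using Lorenz’s weather model, which appears later in this book as Figure 7. The parameter values (sigma = 10, rho = 28, beta = 8/3) are the ones conventionally used for this model; they, the function names and the fixed-step Runge-Kutta integrator are illustrative assumptions rather than anything taken from the Minsky file.

```python
def lorenz(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Rates of change (dx/dt, dy/dt, dz/dt) of the Lorenz system."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(f, state, dt):
    """Advance `state` by one fixed time step using classical Runge-Kutta."""
    k1 = f(state)
    k2 = f(tuple(s + 0.5 * dt * k for s, k in zip(state, k1)))
    k3 = f(tuple(s + 0.5 * dt * k for s, k in zip(state, k2)))
    k4 = f(tuple(s + dt * k for s, k in zip(state, k3)))
    return tuple(
        s + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def max_distance_from_origin(start, t_end=20.0, dt=0.01):
    """Integrate from `start` and return the largest distance from (0, 0, 0)."""
    state, largest = start, 0.0
    for _ in range(int(round(t_end / dt))):
        state = rk4_step(lorenz, state, dt)
        largest = max(largest, sum(s * s for s in state) ** 0.5)
    return largest


# Exactly at the equilibrium every rate of change is zero, so nothing moves.
at_rest = max_distance_from_origin((0.0, 0.0, 0.0))
# One millionth away from it, the system travels far from the equilibrium.
nudged = max_distance_from_origin((1e-6, 0.0, 0.0))
print(at_rest, nudged)
```

The origin is an equilibrium of these equations, yet the smallest disturbance carries the system far away from it, which is why an unstable equilibrium tells you little about what the system will actually do.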

System dynamics models in general demonstrate the behaviour of a system of equations that the modeler believes mimic the behavior of a real-world system which changes over time. There are many other system dynamics programs out there—Stella, IThink, Vensim, Modelica, AnyLogic, Matlab’s Simulink, Wolfram’s System Modeler, Insight Maker, to mention but a few. What distinguishes Minsky from the pack are its “Godley Tables”, which are designed to make it easy to model the dynamics of monetary systems. System dynamics itself can be challenging, and it involves many concepts that may be foreign to you when you first use such a program. But Godley Tables are easy: if you have used a spreadsheet, you can design a model of a monetary system using Godley Tables.

Minsky is also Open Source, which means that (a) it is free and (b) its source code is available for anyone to inspect and, if they wish, modify.

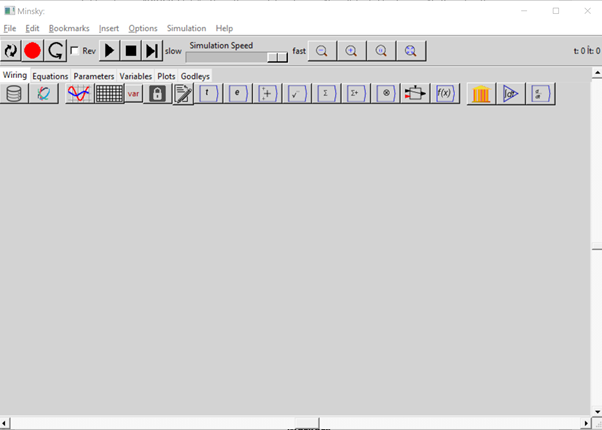

To use Minsky, you first have to download it from one of its online repositories. The main site is SourceForge (https://sourceforge.net/projects/minsky/), and it is also available for more technically savvy users at https://github.com/highperformancecoder/minsky. The SourceForge system recognizes the operating system of the computer you’re using to access SourceForge, and delivers the appropriate version: Windows builds for Windows users, Mac builds for Mac users and source code tarballs for anyone else. For most popular Linux distros, a prebuilt packaged Minsky is available from the OpenSUSE Build Service (OBS).

In this manual I exclusively use the Windows version of Minsky, which, at the time of publication of this book (31st December 2021), is version 2.35. This version has a vast number of improvements over the previous release, thanks to a £200,000 grant from the Friends Provident Foundation of the UK. The next major release—expected sometime in January 2022—will implement Minsky’s user interface in Javascript, rather than its current GUI of Tcl/Tk.

Pre-release versions of Minsky are made available as “betas”, to enable users to test extensions to the program out before a final release. User feedback is an essential part of the software development process, and we’d be delighted to have you help us out by testing the new versions, reporting bugs, suggesting improvements to features, etc. If you’d like to assist us in this work, please download MinskyBeta from https://sourceforge.net/projects/minsky/files/beta%20builds/, and sign up to the beta-testers program at https://sourceforge.net/p/minsky/mailman/. Both release and beta versions of Minsky can be installed at the same time.

- Installation

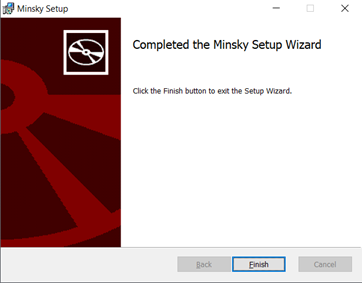

To install Minsky on a Windows PC, double click on the MSI (“MicroSoft Installer”) file that you have downloaded from SourceForge. This will bring up the dialog box shown in Figure 1.

Figure 1: Installer dialog box for Minsky

Click on “Next” and you will see the license agreement dialog box:

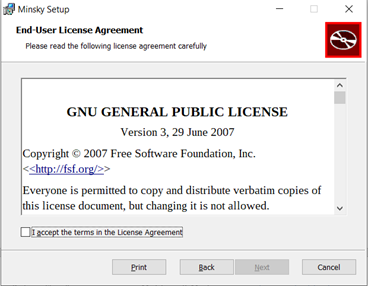

Figure 2

Click on the “I accept the terms in the License Agreement” checkbox (this is a standard Open-Source license, involving no user fees) and the Next button will become available. Click on it, and the install destination dialog box appears.

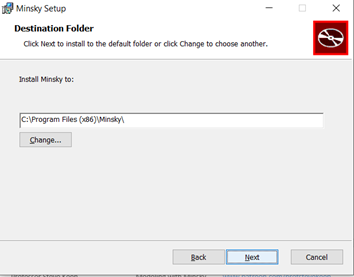

Figure 3

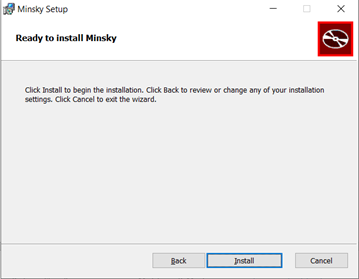

Figure 4

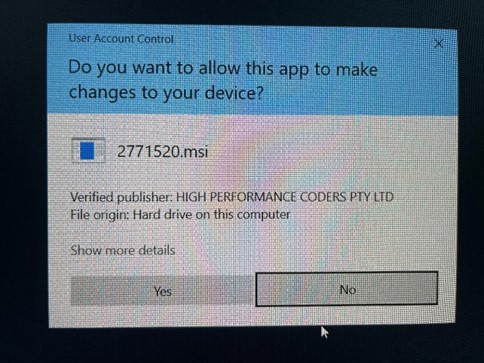

Click on Install, and after a short delay, your screen should go blank, except for the form shown in Figure 5. Click on “Yes”, and the installation will commence.

Figure 5

When it finishes, you have one more dialog box to contend with—see Figure 6.

Figure 6

You are now ready to use Minsky. Press the Windows key on your keyboard to bring up the main Windows menu (or the equivalent on a Mac or Linux box), choose Minsky, and you’re ready to delve into the world of system dynamics and monetary modelling.

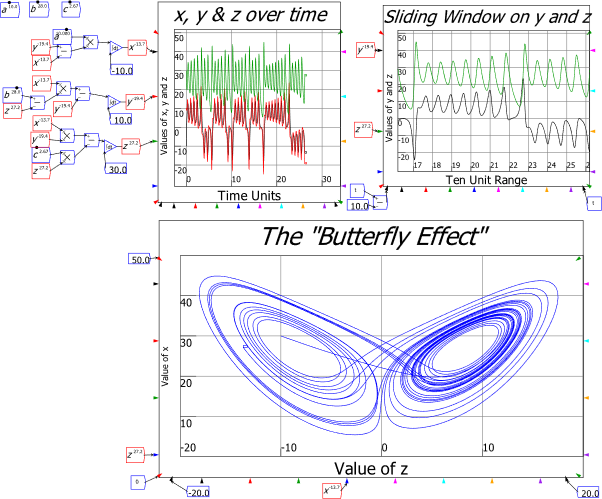

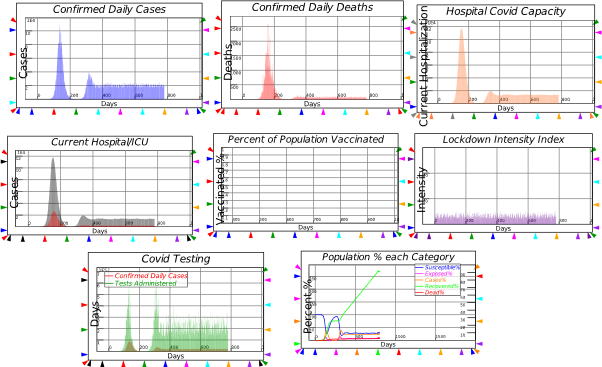

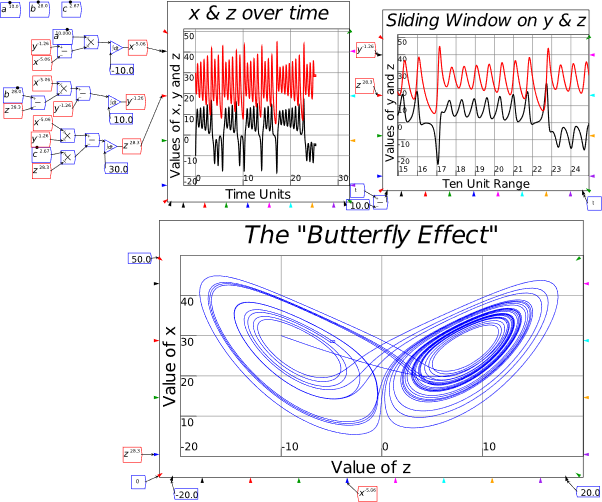

I urge you to not just read this book, but also to build the models in it yourself as you read it. Have this book open, physically or on screen, with Minsky on your computer, and create the models as you read. Ideally, you would be doing this in a workshop with a tutor guiding the process—something I used to do with my students at Kingston University. Especially now in the age of Covid, this isn’t possible—so it’s up to you to follow the instructions in this book, and then replicate them in your own Minsky models on your computer. You will doubtless make mistakes. But you will learn from mistakes and ultimately learn how to use Minsky to learn economics, and to create models of your own, for your own purposes. This can range from just the fun of being able to simulate chaotic systems—see Figure 7—to building a robust, large-scale model of a national economy.

Figure 7: The Lorenz model of turbulent flow in Minsky

You will also almost certainly encounter bugs, ranging from minor annoyances—such as, at present, text boxes for plots running outside the plot boundaries—to fatal crashes, where the program hangs and suddenly you find yourself staring at the desktop. There will, hopefully, be very few of the latter—the funding that we received from Friends Provident Foundation in 2018 has allowed us to dramatically improve the program’s stability, as well as to add numerous features. But they will happen nonetheless: bugs are a given in any computer software.

If you find a bug, please report it to the beta-testers list, which you can find at https://sourceforge.net/p/minsky/mailman/. The user groups that exist there are:

- minsky-betatesters: Subscribe | Archive | Search — A list for people who’d like to test beta versions of Minsky

- minsky-developer: Subscribe | Archive | Search

- minsky-users: Subscribe | Archive | Search — For topics related to general usage of Minsky

If you plan on being an active user of Minsky, please sign up to at least minsky-users and minsky-betatesters. In the former you can get feedback and advice from other users; in the latter, you can report bugs (or feature requests) that will enable us to improve Minsky over time.

Finally, consider signing up to Minsky’s page on Patreon, https://www.patreon.com/HPCODER. The minimum signup amount is US$1 per month (plus sales or value added taxes, which vary from country to country). Ideally, this would provide sufficient funds to enable Minsky’s programmer Dr Russell Standish to work full-time maintaining and extending Minsky. At present (October 2021), it raises a small amount: $520 a month from 86 patrons. This at least covers Russell’s costs in producing compiled versions, which take about 4 hours to generate, but it’s a long way short of enabling him to work full-time on Minsky itself. I would prefer to see twenty times the revenue coming in, and forty times the number of Patrons. If you like using Minsky, and if this manual teaches you anything at all, please help make that wish come true.

- Understanding money: “Minsky for Dummies”

This is a very technical book, for the simple reason that it explains how to build economic models using a mathematical program. But a lot can be done with Minsky without doing mathematical modelling, because many of the issues in dispute these days in economic theory and policy come down to “How are you going to pay for it?”

To really answer that question, you have to understand the dynamics of our monetary system—and that means you have to understand double-entry bookkeeping, because that’s the way banks and governments create money, and keep track of financial transactions. Minsky was built to do that, with its unique feature of “Godley Tables”. You can use the Godley Tables alone to answer many of the questions that dominate political debate today:

- Is there a “magic money tree”?

- Do banks create money?

- What are Reserves used for?

- What do taxes do?

And so on. In this chapter I’ll show how to pose and answer questions like these using Minsky, without having to write a single equation. Instead, I’ll just use the unique feature of Minsky, its “Godley Table” (see Figure 8).

Figure 8: The “Godley Table” icon

You can place a Godley Table on Minsky’s design canvas in two ways:

- By choosing “Insert/Godley Table” from the Insert menu; or

- By clicking once on the Godley Table icon on the toolbar (see Figure 9), and then clicking again to place the icon somewhere on the canvas.

- You may be used to using “click and drag” to insert objects in other programs, like Paint. This doesn’t work in Minsky: instead, you click once to attach an object to the cursor, and then a second time to place the object somewhere on the canvas.

- At some stage in the future we might support both “click, then click and place” and “click and drag”, but for the moment, we only support the first method.

Figure 9: Minsky’s Toolbar, with the Godley Table icon the 3rd from the right

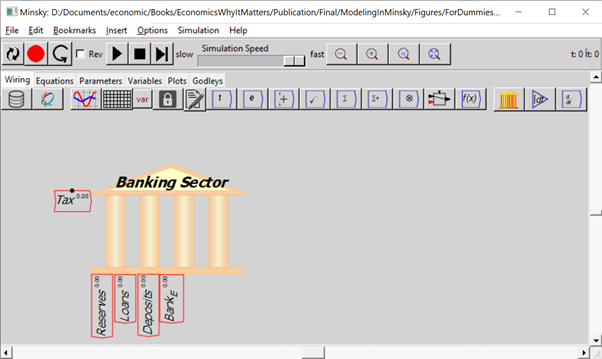

Once you’ve inserted the Table on the canvas somewhere, it will look like Figure 10.

Figure 10: Minsky’s canvas with a single Godley Table

To use the Godley Table, either double-click on the icon, or click on your right-mouse-button and choose “Open Godley Table” from the menu—see Figure 11.

Figure 11: The right-click (context-sensitive) menu for a Godley Table

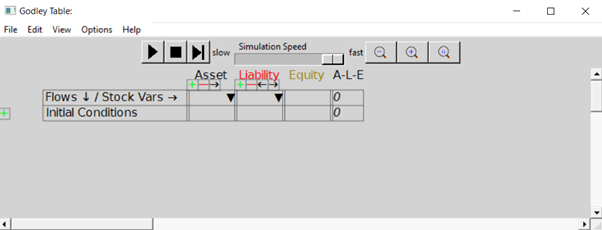

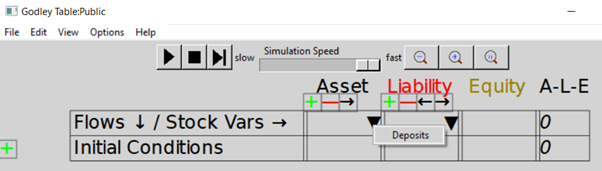

That will bring up a new window for editing the Godley Table—see Figure 12. This is a free-standing Windows/Mac/Linux window, so you can switch between it and the canvas using Windows commands and their Mac and Linux equivalents (Alt-Tab is the keyboard shortcut to move between windows in Windows). You can have multiple Godley Table windows open at once, as well as the window for the main canvas.

Figure 12: A Godley Table open for editing in its own window

The top row of the Table has tools for running a model, and for zooming in and out on the Table itself. The most useful tools at this stage are the magnifying glasses, which let you zoom out, zoom in, or set the size of the Table to its default. Figure 13 shows the same blank table as in Figure 12 after six clicks on the zoom in tool.

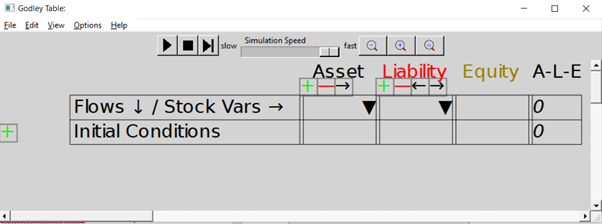

Figure 13: A magnified view of a Godley Table

The next row shows that all accounts in a Godley Table have to be classified either as an Asset (a claim that you have on someone else), a Liability (a claim that someone else has on you), or Equity (the gap between Assets and Liabilities).

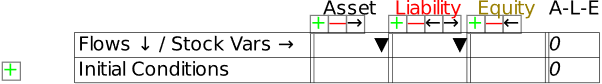

The Table starts with room for just one Asset, one Liability, and one Equity column, but of course a significant model is going to have more than one of each. That’s what the symbols on the next row are for: the

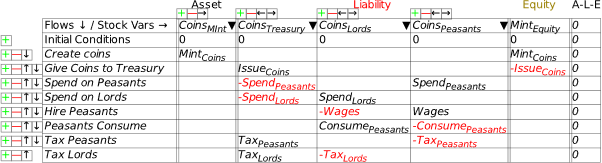

adds a new column, the

deletes an existing column, and the symbols move a column to the left or right. Now let’s build a simple model that, without the need for any equations, will show how a modern monetary system works.

- Fiat Money

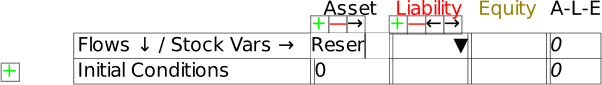

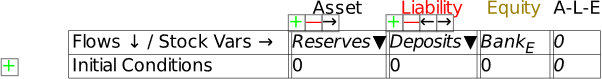

To use the table, you first have to name the Assets, Liabilities and Equity columns on the table. That’s where the next row, which starts with “Flows ↓ / Stock Vars →”, comes in. If you click in one of the cells on that row and start typing, you are providing a name for one of the stocks in the Table (ignore the upside-down triangle there for now; we’ll come to that soon). In Figure 14, I’ve clicked in the Asset cell and I’ve started to type the word “Reserves”. Once I press the Enter key (or click outside the cell using the mouse), I’ve defined the stock “Reserves”.

Figure 14: Naming a stock in a Godley Table

Click in the cell below Liability and enter “Deposits” (without the inverted commas of course!), and in the cell below Equity, type Bank_E. The underscore tells Minsky to subscript the next character, so when you press Enter, or click outside the cell, the program will display Bank with a subscripted E in that cell (the subscript stands for “Equity”; this text writes it as BankE). When you’re finished, you’ll have the basic elements of the simplest possible model of banking. In fact, it is too simple, because it doesn’t include the key thing that defines a bank: its capacity to make loans.

Figure 15: A basic Godley Table with 3 stocks: Reserves, Deposits and BankE

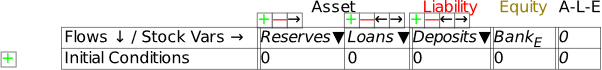

We’ll add that by using the add-column key below the Asset heading. That creates an additional blank cell next to Reserves. If you type “Loans” into this cell, you have Figure 16, the starting point for understanding our monetary system: a banking sector with the Assets of Reserves and Loans, the Liability of Deposits, and the difference between them, the banking sector’s equity BankE, which must be positive, since one rule of banking is that a bank must have more Assets than Liabilities.

Figure 16: The minimum stocks to show the credit and fiat money roles of banks
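The relationship between these four stocks can be sketched in a few lines of Python. The opening balances below are invented purely for illustration (the text does not give any), and the variable names are hypothetical.

```python
# Hypothetical opening balances for the stocks named in the text.
assets = {"Reserves": 100.0, "Loans": 400.0}
liabilities = {"Deposits": 450.0}

# Equity is the gap between total Assets and total Liabilities.
bank_equity = sum(assets.values()) - sum(liabilities.values())

# The rule quoted above: a bank must have more Assets than Liabilities.
is_solvent = bank_equity > 0
print(bank_equity, is_solvent)
```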

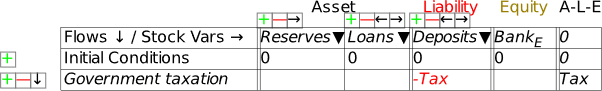

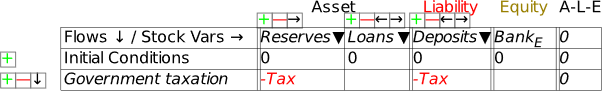

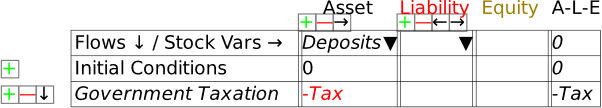

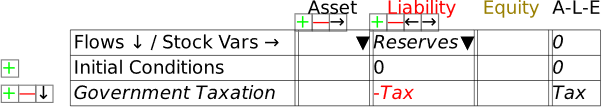

If we were going to build a simulation model, then the next row would be critical: this shows the initial amounts in the various accounts. But since this chapter is about using Minsky without building a simulation, we’ll skip over it and instead click on the key at the beginning of the row. This adds a row for recording a financial transaction. Let’s start with government taxation, using the name “Tax” as a placeholder for the flow of money out of Deposits. If you type “-Tax” into the cell beneath Deposits, you’ve recorded what taxation does: it takes money out of the bank accounts of the public. At this stage, Minsky lets you know that your entry isn’t complete, because you have only one entry for Tax, when every financial operation requires two entries per row.

Note: One limitation of Minsky at present is that entries in the rows must be variables (words like “Tax”, “Spend”, etc.) rather than numbers like “900”, “1100”, etc. This is because Minsky was developed to build dynamic equations, and the entries in those equations are variables rather than constants.

Figure 17: Taxation entered as a deduction from Deposits

To complete this row, you need to add another entry so that the “Fundamental Law of Accounting”, that $\text{Assets} = \text{Liabilities} + \text{Equity}$, is enforced. It should be obvious that the correct thing to do is to add another “-Tax” to the Reserves column as well: it doesn’t make sense to insert it in the Loans column (which we’ll model in the next section), or in Bank Equity. Therefore, taxation reduces not only Deposits, but also Reserves (Figure 18).

Figure 18: A fully entered double-entry bookkeeping record of taxation
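The check that Minsky applies to each row can be sketched roughly as follows. This is an illustrative Python reconstruction of the bookkeeping rule described above, not Minsky’s own code: the `COLUMNS` layout, the `residual` function and the way a cell is stored (a flow name with a signed coefficient) are all assumptions made for the example.

```python
from collections import Counter

# Column layout of the banking sector table: stock name -> account class.
COLUMNS = {"Reserves": "Asset", "Loans": "Asset",
           "Deposits": "Liability", "Bank_E": "Equity"}


def residual(row):
    """Return Assets - Liabilities - Equity for one row, per flow variable.

    `row` maps a stock name to {flow variable: signed coefficient}.
    An empty result means the row obeys the accounting identity.
    """
    total = Counter()
    for stock, entry in row.items():
        sign = 1 if COLUMNS[stock] == "Asset" else -1
        for flow, coefficient in entry.items():
            total[flow] += sign * coefficient
    return {flow: c for flow, c in total.items() if c != 0}


# Figure 17: "-Tax" entered under Deposits only.
incomplete = {"Deposits": {"Tax": -1}}
# Figure 18: "-Tax" entered under both Deposits and Reserves.
complete = {"Deposits": {"Tax": -1}, "Reserves": {"Tax": -1}}

print(residual(incomplete))  # non-empty: the row does not balance yet
print(residual(complete))    # empty: the row balances
```

Note that the check is purely arithmetic: a “-Tax” entry under Loans would balance the row just as well. Choosing Reserves is a judgment about what the transaction actually is, which is why the text argues for it rather than leaving it to the program.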

Once you’ve made these entries in the Godley Table, your canvas should look like Figure 19 (where I’ve also used the “Title” menu item on the right-click menu to name this table “Banking Sector”). The stocks you’ve defined in the table are shown at the bottom of the icon; the single flow that you’ve defined is shown on the left-hand side.

Figure 19: A Minsky model with the Godley Table shown in Figure 18.

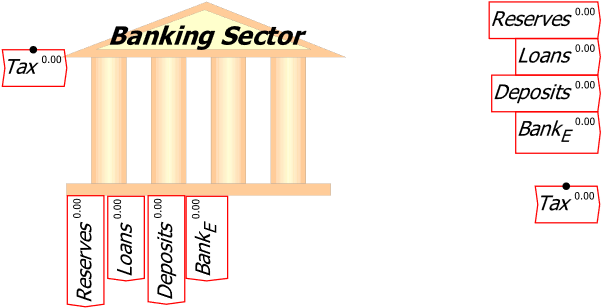

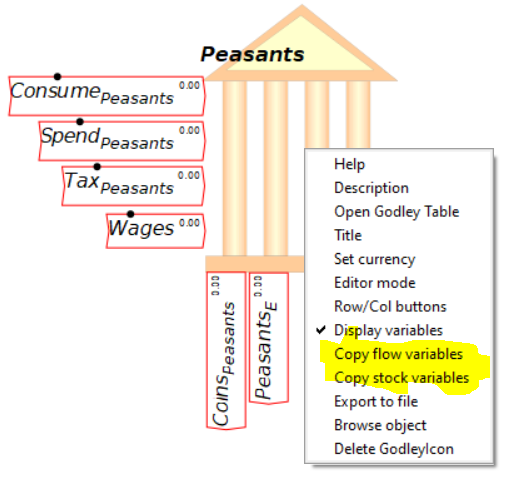

If you were defining an actual model right now, the next stage of the process would be to place the mouse over the icon, and use the right-click menu to “Copy stock variables” and “Copy flow variables”. Figure 20 shows the model with both operations done, and the stock variables (Reserves, Loans, Deposits and BankE) placed on the canvas. Then you’d go about defining the flows using the stocks, additional parameters and variables, etc. But we’ll leave that for now and just continue using Minsky’s capability to model the interlocking financial assets and liabilities that define a monetary economy.

To make the canvas less cluttered, I’m going to use the right-click menu to turn off display of these variables: the option “Display variables” is ticked by default, and a click on that turns it off so that all you see is the bank icon itself.

Figure 20: The stock and flow variables copied and placed on the canvas
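For readers curious about what “defining the flows using the stocks” leads to, the following Python sketch shows one way the table in Figure 18 could be turned into a simulation. It assumes that each stock changes at a rate equal to the sum of the flow entries in its column, and it invents a rule for the flow (Tax as a fixed fraction of Deposits per year) along with the opening balances; none of these numbers or names come from the text, and the simple Euler integration is a stand-in for whatever method Minsky itself uses.

```python
# Flow entries per stock column, as in Figure 18 (coefficient on each flow).
COLUMN_ENTRIES = {
    "Reserves": {"Tax": -1},
    "Loans": {},
    "Deposits": {"Tax": -1},
    "Bank_E": {},
}


def rates_of_change(flows):
    """Sum each column's entries to get that stock's rate of change."""
    return {
        stock: sum(coeff * flows[name] for name, coeff in entries.items())
        for stock, entries in COLUMN_ENTRIES.items()
    }


def simulate(stocks, tax_rate, years, dt=0.01):
    """Euler-integrate the stocks, with Tax defined from the Deposits stock."""
    stocks = dict(stocks)
    for _ in range(int(round(years / dt))):
        flows = {"Tax": tax_rate * stocks["Deposits"]}  # hypothetical flow rule
        for stock, rate in rates_of_change(flows).items():
            stocks[stock] += rate * dt
    return stocks


# Hypothetical opening balances and tax rate, chosen only for illustration.
start = {"Reserves": 100.0, "Loans": 400.0, "Deposits": 450.0, "Bank_E": 50.0}
end = simulate(start, tax_rate=0.1, years=1.0)
print(end)
```

Because the row balances, Reserves and Deposits fall by the same amount and the table stays balanced throughout the run. With taxation as the only recorded flow, the stocks can only drain.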

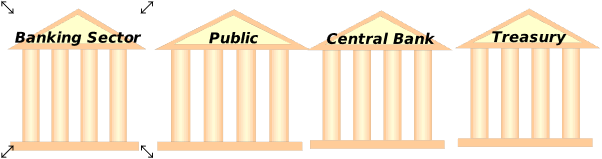

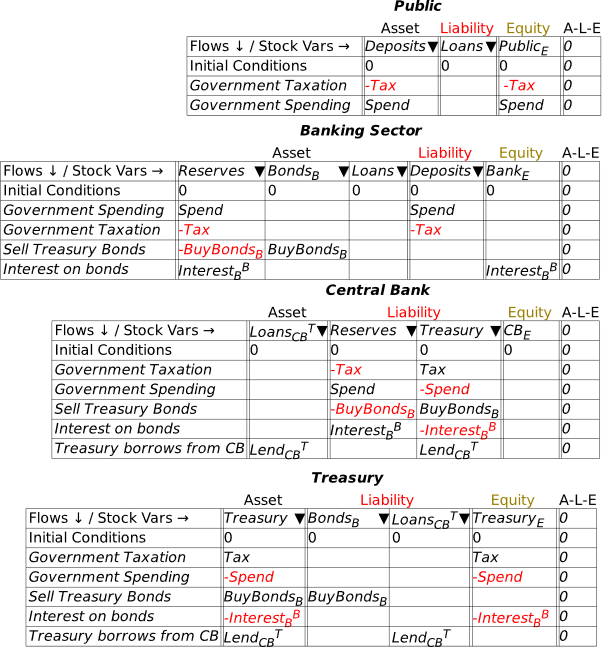

At this stage, we’re simply seeing the financial system from the point of view of the banking sector. A complete model involves seeing it from all perspectives, including here the public (where Deposits, which are a Liability of the banking sector, are an Asset of the public), the Central Bank (since Reserves, which are an Asset of the banking sector, are a Liability of the Central Bank), and the Treasury (which is the originator of the taxation operation).

To do this, we need to add an additional three Godley Tables—one each for the Public, the Central Bank, and the Treasury. In Figure 21, I’ve named them all appropriately using the Title option on the right-mouse menu (you can also name a table when working on the Godley Table itself: “Title” is an option on the Edit menu, and the right-click menu also has a Title option).

Figure 21: The model with 3 more blank Godley Tables

To populate these tables, we make use of one feature I haven’t yet explained, the upside-down triangle or wedge, in the cells for naming stocks. If you click on one of these wedges, Minsky returns a list of all the Liabilities (or Assets) that haven’t already been recorded as an Asset (or Liability) for some other entity in the model.

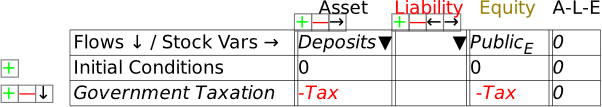

Open up the Godley Table for the Public and click on the wedge in the Asset cell, and one entry will appear in a drop-down menu: Deposits—see Figure 22.

Figure 22: Using the Assets and Liabilities Wedge

Click on Deposits to choose it, and Minsky will show Deposits as an Asset of the Public, and auto-populate the column with all the operations that have been entered on the Banking sector table that affect Deposits—so far, this is only the negative entry for taxation. This gives us Figure 23. Notice that the A-L-E column has a non-zero entry in it, showing that the matching double-entry for this table hasn’t yet been entered.

Figure 23: Deposits as an Asset for the Public

The only sensible option here is that taxation reduces the equity position of the non-bank public sector. Name the Equity cell PublicE, add the entry “-Tax” on the Government Taxation row, and this operation is now shown from the public’s perspective: taxation takes money out of the public’s bank accounts, and reduces its equity. This is a fundamental proposition in MMT—Modern Monetary Theory—and it’s obviously true, when you see the world through the double-entry bookkeeping eyes of Minsky.

Figure 24: Taxation shown from the public’s point of view

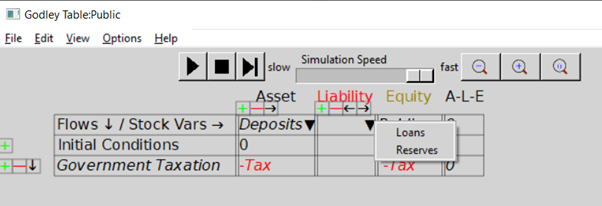

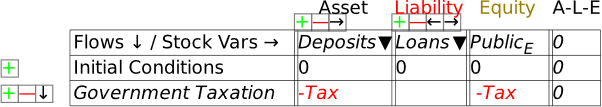

Next, let’s add the public’s liability in this model—loans from the banking sector. If you click on the wedge under Liabilities, the drop-down menu will reveal two choices: Loans and Reserves. Click on Loans, and you’ll get Figure 25. We’ll add flows to the Loans column in the next section—in this one we’re focusing on government operations.

Figure 25: Auto-populating the Public’s Liabilities

Figure 26: The Public’s Godley Table completed

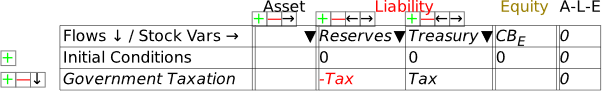

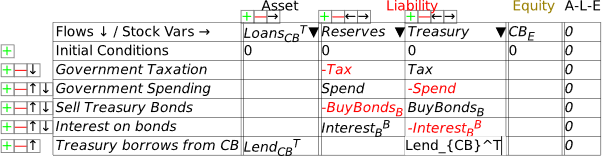

That’s taken care of Deposits, which is shown as a Liability of the Banking Sector and an Asset of the Public. Now we must do the same for Reserves. These, as is well known, are a Liability of the Central Bank: in effect, Reserves are the deposit accounts of private banks at the Central Bank. Open the Central Bank’s Godley Table, click on the wedge in the Liabilities cell, and choose “Reserves”. That generates Figure 27.

Figure 27: The Central Bank Godley Table with Reserves entered

As with the earlier exercise with the Public’s table, we have just a single entry for Tax: there’s nowhere obvious to record it a second time, since it’s not the Central Bank that does the taxing, but the Treasury. Therefore, the sensible thing to do here is to add an additional Liability for the Central Bank, the deposit account of the Treasury—which I simply call Treasury (see Figure 28). I’ve also named the Equity column for the Central Bank CBE.

Figure 28: Treasury account added to Central Bank Godley Table

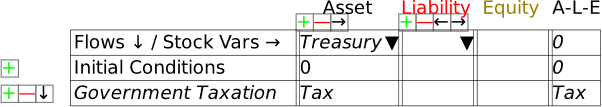

This now gives us a Liability for the Central Bank—the Treasury’s account—which is an Asset for the final entity in this model, the Treasury itself. Bring up the Treasury’s Godley Table, click on the wedge for Assets, choose “Treasury”, and you’ll have Figure 29.

Figure 29: The Treasury’s Godley Table on initial entry of its Asset, the Treasury account at the Central Bank

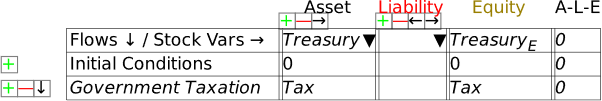

To complete the model at this stage, you need to enter the balancing entry for Tax—and the obvious place for it to go is in the Equity column for Treasury: taxation increases the Equity of the Treasury (Figure 30). This is the obverse side of the MMT point that “the Public sector’s surplus is the Government sector’s deficit”: taxation subtracts from the Equity of the public and increases the equity of the government.

Figure 30: Treasury Equity shown

This is also the point at which a genuine Fiat money system differs from a commodity-backed system—a “Gold Standard”, for example—or from one like the Eurozone, where national treasuries cannot produce the currency they spend. In such systems, Tax would add to the Treasury’s stock of Gold (or Euros), while government spending—which I’ll introduce shortly—would run that stock down.

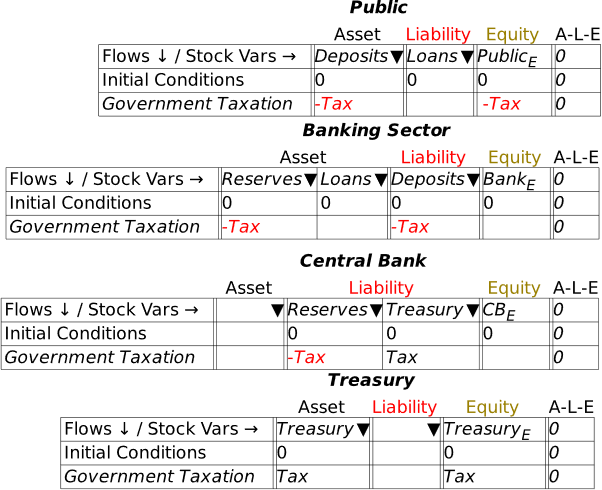

We now have a complete model of the impact of taxation in a Fiat money system, in that every Asset is shown as another entity’s Liability, and all flows are recorded four times: twice in each table they appear in, and once each as affecting an Asset and a Liability. Via double-entry bookkeeping, this gives us eight entries for the one operation.
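The bookkeeping behind this count can be sketched in a few lines of Python. This is only an illustrative stand-in for the four Godley Tables, not Minsky's own data format or API: the dictionary layout, the function names, and the column name `TreasuryE` for the Treasury's equity are assumptions made for the example.

```python
# Hypothetical, minimal stand-in for the Tax row of each Godley Table.
# Each column maps to (side, sign of the Tax entry), where side is
# "A" (Asset), "L" (Liability) or "E" (Equity). Not Minsky's API.
TAX_ROW = {
    "Banks":       {"Reserves": ("A", -1), "Deposits": ("L", -1)},
    "Public":      {"Deposits": ("A", -1), "PublicE": ("E", -1)},
    "CentralBank": {"Reserves": ("L", -1), "Treasury": ("L", +1)},
    "Treasury":    {"Treasury": ("A", +1), "TreasuryE": ("E", +1)},  # TreasuryE: assumed name
}


def row_balance(row):
    """Return Assets - Liabilities - Equity for one row of one table."""
    totals = {"A": 0, "L": 0, "E": 0}
    for side, sign in row.values():
        totals[side] += sign
    return totals["A"] - totals["L"] - totals["E"]


def sides_of(stock):
    """List the sides on which a stock appears across all tables."""
    return sorted(
        side
        for row in TAX_ROW.values()
        for column, (side, _) in row.items()
        if column == stock
    )


# Every table's Tax row should balance (the A-L-E column shows zero).
balances = {name: row_balance(row) for name, row in TAX_ROW.items()}

# Two entries in each of four tables.
entry_count = sum(len(row) for row in TAX_ROW.values())

print(balances)
print(entry_count)
print({stock: sides_of(stock) for stock in ("Reserves", "Deposits", "Treasury")})
```

Each financial stock shows up exactly once as an Asset and once as a Liability, which is the condition the wedge drop-down enforces as you build the tables.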

To see this whole system, click on the Tab labelled “Godleys”, and you’ll see all the Godley Tables at once. They’ll be a jumble when you first click on the tab, but you can easily move them around to produce an arrangement like Figure 31.

Figure 31: The complete basic model, with all four Godley Tables

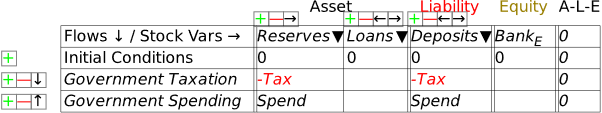

We can now add government spending to the model, and it’s effectively the opposite of Tax: government spending increases the public’s equity and reduces the government’s. You can start anywhere you like in the system—from the Public’s Godley Table, or the Treasury’s, Central Bank or the Banking Sector—and Minsky will point out where the matching entries are needed. I started with the Banking Sector’s view in Figure 32:

Figure 32: Adding government spending into the model

A minute or so later, I had the picture shown in Figure 33.

Figure 33: The complete model with government spending as well as taxation

This lets us see the key points of Modern Monetary Theory—not because I’ve been explaining the theory itself, but because the “theory” is fundamentally based on an accurate portrayal of the accounting. A government deficit creates net financial assets for the public, and simultaneously creates negative net financial assets for the government: the government deficit is the private sector surplus.

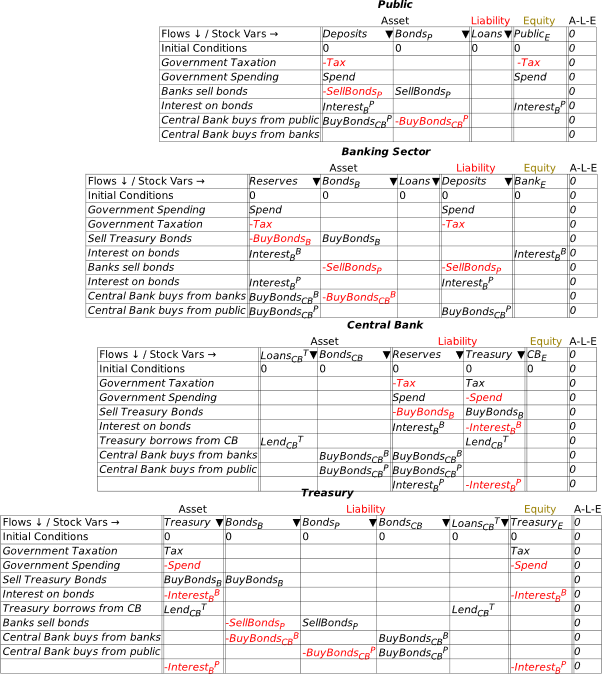

To complete the picture of a modern fiat money system, we need to include bond sales by the Treasury to the Banking Sector in the initial auction, sales by the Banking Sector to non-banks (which can include other financial institutions, such as Pension and Hedge Funds), and purchases by the Central Bank of bonds from both the Banking Sector and the Public.

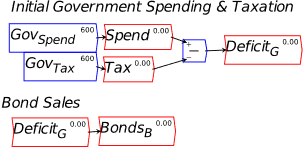

- Bond Sales

In the model to date, if the Treasury spends more than it taxes, the Treasury’s account at the Central Bank will go into overdraft—notice in Figure 33 that the only flow entries in the Treasury’s account at the Central Bank are Tax and Spend. So if Spend is greater than Tax, then over time, this account will turn negative.

For an ordinary customer of an ordinary bank, that’s a serious problem. A negative deposit account might not be approved in the first place—so that any intended transaction which sends an ordinary depositor’s account into negative territory would be rejected for insufficient funds. If an overdraft is approved by the bank, it attracts a punitive interest rate, normally higher than the interest rate on loans themselves. If the customer breaches the terms of the bank’s overdraft—by not making an interest payment, or breaching any of the many caveats that a bank can attach to an overdraft agreement—it can lead to the bank initiating bankruptcy proceedings against its customer.

But what is the situation for the Treasury and the Central Bank? In a country which issues its own currency, the Treasury is the effective owner of the Central Bank. Though specific laws can change the situation, technically, the Central Bank is obliged to let the Treasury do what it wants, even if that means a negative balance on the Treasury’s account at the Central Bank. It would be quite possible, in an accounting sense, for the government to simply operate with an overdraft account at the Central Bank: it doesn’t have to sell bonds at all.

However, most countries have passed laws forbidding the Treasury from operating in overdraft mode, except in exceptional circumstances like the pandemic—where, for example, the Bank of England initially allowed Treasury to operate its overdraft account, rather than having to sell bonds to avoid going into overdraft. Some countries also require the Central Bank to charge the Treasury interest on either overdrafts or loans. But even in countries which do that, the interest is returned to the Treasury as part of the operating profits of the Central Bank. This is why there is a “magic money tree”: a currency-issuing country can create money by running a deficit, and it does not have to borrow from either private banks or the public to finance that deficit.

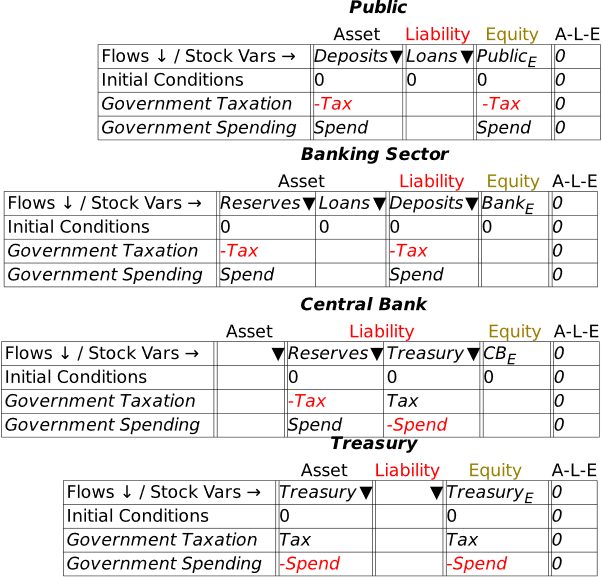

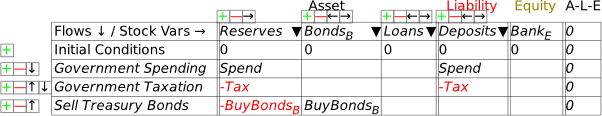

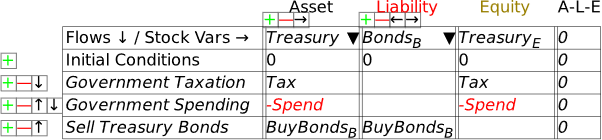

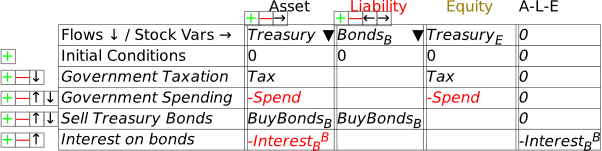

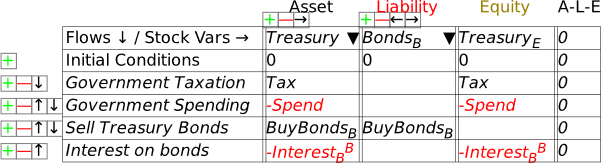

What do bond sales in fact do? Let’s add them to the model and find out. This requires one more Asset column for the Banking Sector, which is the sector that initially purchases Treasury Bonds. I’ve named the Asset for Banks BondsB, to indicate that these are Bonds owned by the banks—rather than, say, the Central Bank or the Public—and labelled the transaction BuyBondsB in Figure 34.

Figure 34: Banks buy Bonds from the Treasury

That’s showing the increase in the Banking Sector’s Assets from buying the bonds, but how do they finance the purchase? In other words, where is the second entry required by double-entry bookkeeping to show the purchase? The only viable option is that the funds used to purchase the bonds come from Reserves—and these Reserves were created by the deficit: the excess of Spend over Tax. So, as well as creating money for the private sector, the deficit creates excess Reserves, which the Banking Sector uses to buy the bonds. As long as the value of bonds sold by Treasury is equal to or less than the deficit, the Banking Sector has the Reserves needed to buy them: see Figure 35.

Figure 35: Bond purchase balanced by showing bonds are bought using Reserves created by the deficit

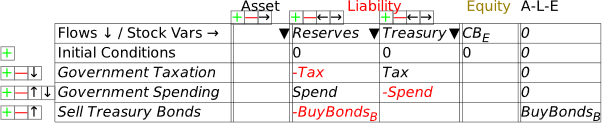

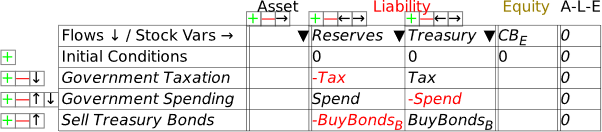

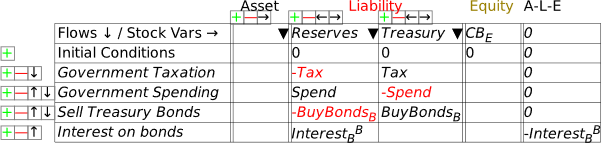

This completes the Banking Sector’s Godley Table, but it leaves the Central Bank’s incomplete—see Figure 36.

Figure 36: The Central Bank’s Godley Table after the Banking Sector’s Table has been completed

The obvious way to complete the Central Bank’s Table is that the proceeds from the sale of Bonds top up the Treasury account: see Figure 37.

Figure 37: The Central Bank’s Table with the sale of Bonds fully recorded

This shows the real purpose of bond sales, from the Government’s point of view: they enable the Treasury’s account at the Central Bank to avoid going into overdraft. If the revenue from bond sales (BuyBondsB here) equals the deficit (Spend minus Tax), then there’s no change to the balance in the Treasury account from running a deficit.
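A rough numerical sketch makes the point concrete. The function below is a hypothetical illustration, not something Minsky generates: it assumes constant flows per period, starts every stock at zero, and uses made-up values for Spend, Tax and bond sales.

```python
def simulate(spend, tax, bond_sales, periods):
    """Track three stocks under constant flows (all values are assumptions).

    Treasury: the Treasury's account at the Central Bank.
    Reserves: the Banking Sector's reserve account.
    BondsB:   Treasury bonds held by the Banking Sector.
    """
    treasury = reserves = bonds_b = 0
    history = []
    for _ in range(periods):
        # Tax and bond sales add to the Treasury account; Spend runs it down.
        treasury += tax - spend + bond_sales
        # The deficit adds to Reserves; buying bonds uses Reserves up.
        reserves += spend - tax - bond_sales
        bonds_b += bond_sales
        history.append({"Treasury": treasury, "Reserves": reserves, "BondsB": bonds_b})
    return history


# Made-up flows: a deficit of 20 per period.
no_bonds = simulate(spend=100, tax=80, bond_sales=0, periods=3)
with_bonds = simulate(spend=100, tax=80, bond_sales=20, periods=3)

print(no_bonds[-1])    # Treasury account in overdraft, excess Reserves pile up
print(with_bonds[-1])  # Treasury account unchanged, banks hold bonds instead
```

Without bond sales the Treasury account falls by the deficit every period while Reserves rise by the same amount; with bond sales equal to the deficit, the Treasury account stays where it started and the banks end up holding bonds rather than excess Reserves.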

What bonds certainly are not is borrowing money from the banks in the way that individuals do when they take out a mortgage. When you take out a mortgage, it’s because you haven’t got the money needed to do what you want to do—buy a house. If you don’t get the mortgage, you can’t afford to buy the house.

But the government has already created the money it needs to do whatever its proposed activities are by running the deficit itself. Secondly, the Reserves that are used to buy the bonds were created by the government running a deficit. If the deficit didn’t exist, then (at least initially—there’s a change coming when we consider interest payments on bonds) the banks wouldn’t have the funds needed to buy the bonds.

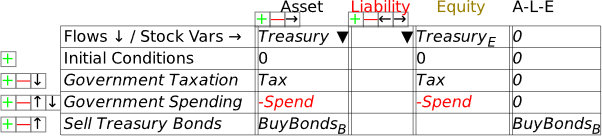

The final step in recording the impact of the bond sales is to add BondsB as a Liability of the Treasury. Open the Treasury’s Godley Table and it will look like Figure 38. Click on the wedge below Liability, and the drop down will show BondsB as an Asset (for the Banking Sector) that hasn’t yet been recorded as some other entity’s Liability.

Figure 38: Treasury Godley Table before BondsB is recorded as a liability

Select BondsB and Minsky automatically balances the table: see Figure 39.

Figure 39

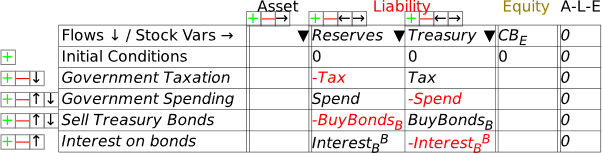

To complete modeling bond sales to banks, we need to include the payment of interest on those bonds. In Figure 40, I’ve labelled this Interest with both a subscript and a superscript B—the subscript is there to indicate that it’s interest on bonds, the superscript to indicate that it’s paid to the banks, to distinguish it from interest paid to the public when, at a later stage, banks sell some of these bonds to the public. A superscript is entered into Minsky using the ^ character, which is normally the Shifted character on the 6-key on your keyboard (so the string you type into the cell is Interest_B^B).

Figure 40: Payment of interest to banks on Treasury Bonds

I’ve already made the obvious deduction that this interest payment increases the equity of the banking system—which is one obvious reason that, when the Treasury offers to sell bonds to the banking sector, the offer is always taken up. To do otherwise is to turn down an offer to turn a non-tradeable, non-income-earning asset—Reserves—into a tradeable, income-earning asset—Bonds. To complete the model at this stage, we now need to add this flow to the Central Bank’s and the Treasury’s Godley Tables. When you open up the Central Bank’s Godley Table, it will look like Figure 41: the addition to Reserves is already shown, but the second balancing entry is still needed.

Figure 41

The obvious thing is that the interest payments come out of the Treasury’s account. Make the entry in the Treasury column, and you have Figure 42.

Figure 42

This change in turn cascades into the Treasury’s Godley Table now—see Figure 43.

Figure 43

The obvious closure of this entry is that paying interest reduces the Treasury’s equity—and by precisely as much as it increases the equity of the banking sector. So just as a deficit creates net financial assets for the non-bank public (by crediting their deposit accounts with more money from government spending than is removed by taxation), the interest payments create net financial assets for the banking sector—see Figure 44.

Figure 44

So how does the Treasury pay the interest? In practice, there could be many methods. What I’ll model here is the most sensible for a currency-issuing government: it borrows from the Central Bank.

In practice, this is forbidden in most countries, by legislation that prevents the Treasury borrowing directly from the Central Bank. However, the same outcome can be achieved in a two-step process: the Treasury sells bonds to the private banks to the value of the interest on outstanding bonds, and the Central Bank then purchases these bonds from the private banks on the secondary market.

If you’ve followed me this far, you should be familiar with the steps needed to show this: we add an Asset to the Central Bank’s Godley Table—LoansCBT (which uses another of Minsky’s formatting tricks: encase the characters CB in a pair of curly brackets, {CB}, and Minsky subscripts the two characters together), and use a corresponding flow to show the actual loans. Figure 45 shows the entries on the Central Bank’s table (with the actual entry of the text string into the Treasury column, before Minsky formats it).

Figure 45

If the loan from the Central Bank to Treasury equals the interest payments on the bonds, then the Treasury’s account at the Central Bank can avoid going into overdraft. It doesn’t change the aggregate picture: the Treasury’s negative equity from the deficit creates positive equity for the non-bank public, while interest payments on bonds create negative equity for the Treasury and identical positive equity for the banking sector.

Even without attempting to build a mathematical model, this exercise in laying out the structure of the financial system eradicates a lot of popular myths in mainstream economic thinking:

- A deficit doesn’t take money from the public—in the sense of the government borrowing from the public to finance its deficit—but actually puts money into the hands of the public;

- The deficit creates Reserves for the banking sector, and those Reserves are what banks later use to buy government bonds;

- The deficit creates net equity for the non-bank public, while interest on government bonds creates net equity for the banking sector.

This symmetry—that a deficit for the government means a surplus of precisely the same magnitude for the non-government sectors—is apparent in Figure 46. The sum of the non-bank Public’s and the banking sector’s net equity position is Spend − Tax + Interest_B^B; this is the opposite of the Government’s net equity, Tax − Spend − Interest_B^B.
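The symmetry can be checked with a small sketch that adds up the Equity-column entries described in the text. The layout and the numbers are assumptions for illustration only: `Interest` stands for the interest paid to banks on bonds, and the Central Bank is given no equity entries because the text does not describe any at this stage.

```python
# Signs of the Equity-column entries per sector, as described in the text.
# This is an illustrative layout, not Minsky's file format or API.
EQUITY_FLOWS = {
    "Public":      {"Spend": +1, "Tax": -1},
    "Banks":       {"Interest": +1},
    "CentralBank": {},  # no equity entries described at this stage
    "Treasury":    {"Spend": -1, "Tax": +1, "Interest": -1},
}


def equity_change(sector, flows):
    """Net change in a sector's equity for the given flow values."""
    return sum(sign * flows[name] for name, sign in EQUITY_FLOWS[sector].items())


# Made-up flow values, chosen only to make the arithmetic easy to follow.
flows = {"Spend": 100, "Tax": 80, "Interest": 5}

changes = {sector: equity_change(sector, flows) for sector in EQUITY_FLOWS}
non_government = changes["Public"] + changes["Banks"]
government = changes["Treasury"] + changes["CentralBank"]

print(changes)
print(non_government, government)
```

Whatever values are chosen for the three flows, the non-government total and the government total are equal and opposite, because every flow enters the two sides with opposite signs.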

Figure 46: Full system with bond sales to banks

The final two steps needed to cover the fundamentals of fiat money are sales of bonds by the banking sector to the public, and purchases of bonds by the Central Bank from both banks and the public. Figure 47 shows the full system—which, if you want to learn how to use Minsky, you should try to complete for yourself. It needs:

- Two additional stock variables—BondsCB for bonds owned by the Central Bank, and BondsP for bonds owned by the public;

- The relevant flows for these stocks: sales of bonds by the Banking Sector to the Public, SellBondsP; purchases of bonds by the Central Bank from the Banking Sector, BuyBondsCBB; and purchases of bonds by the Central Bank from the Public, BuyBondsCBP.

As with the previous stages of this exercise, several insights can be gleaned from these Tables that contradict widespread beliefs about government money creation. One of these is even something that I used to believe—that money is only created to the extent that the Central Bank buys government bonds. But in fact, Central Bank purchases of Treasuries are irrelevant to money creation, and indirectly slightly reduce the amount of money created, because they reduce payments of interest to the banking sector and the non-bank public to whom banks have sold bonds they purchased in the primary bond auction (in practice, these bonds are normally purchased from banks by non-bank financial institutions).

Figure 47: Full MMT system with bond transactions between Treasury, Banks, Central Bank and the Public

The reason why Central Bank bond purchases from the banking sector don’t affect the amount of money created by a deficit is apparent in the second table in Figure 47: the purchase reduces the monetary value of the bonds held by banks, and replaces them with an equivalent value of Reserves. The Banks would hope to make a trading profit out of this sale, but the sale itself simply swaps one Asset for the Banks (Bonds) with another Asset (Reserves). In practice, this reverses the process of the Treasury selling bonds to the banks in the first place: it replaces Bonds with Reserves. It is therefore irrelevant to money creation: since the level of Assets remains constant, so too do Liabilities and Bank Equity.

This is an important general point that will recur frequently in this book, and when building models using Godley Tables: for money to be created, an operation must affect both the Asset and the Liability/Equity sides of the Banking Sector’s ledger. Central Bank bond purchases from the Banking Sector only affect the Asset side, and therefore are irrelevant to money creation. The only effect they do have is to reduce money creation slightly, because the Treasury will no longer pay interest to the Banks on these bonds.

On the other hand, Central Bank purchases of Bonds from the public do create money: the sale of the Bonds credits both the public’s deposit accounts at banks, and the reserve accounts of the banks.

Conversely, the sale of bonds by the Banking Sector to the non-bank Public destroys money: the Public’s deposit accounts fall and their holdings of Bonds rise. But even in this case, the money being destroyed was initially created by the deficit itself: only if all the bonds initially purchased by the banks from the Treasury at the bond auction were sold to the public would the actual creation of money by the deficit fall to zero.
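The rule in the last few paragraphs (an operation changes the money supply only if it touches both sides of the Banking Sector's ledger) can be written as a short check. The sketch below is an assumption-laden illustration rather than anything Minsky does internally: the dictionaries and the function `money_effect` are hypothetical, and each operation is recorded with unit-sized entries from the Banking Sector's point of view.

```python
# Which side of the Banking Sector's table each column sits on.
BANK_COLUMNS = {
    "Reserves": "A", "Loans": "A", "BondsB": "A",
    "Deposits": "L", "BankE": "E",
}

# Unit-sized entries on the Banking Sector's table, following the text.
OPERATIONS = {
    "BuyBondsCBB": {"Reserves": +1, "BondsB": -1},    # Central Bank buys bonds from banks
    "BuyBondsCBP": {"Reserves": +1, "Deposits": +1},  # Central Bank buys bonds from the public
    "SellBondsP":  {"BondsB": -1, "Deposits": -1},    # banks sell bonds to the public
}


def money_effect(entries):
    """Classify an operation by its net effect on the Liability/Equity side."""
    assets = sum(v for col, v in entries.items() if BANK_COLUMNS[col] == "A")
    claims = sum(v for col, v in entries.items() if BANK_COLUMNS[col] in ("L", "E"))
    if assets != claims:
        raise ValueError("row does not balance: Assets must equal Liabilities plus Equity")
    if claims > 0:
        return "creates money"
    if claims < 0:
        return "destroys money"
    return "no effect on money"


for name, entries in OPERATIONS.items():
    print(name, "->", money_effect(entries))
```

A pure asset swap nets to zero on both sides and so leaves money unchanged, while the other two operations move Deposits and therefore create or destroy money.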

That covers government money creation. Now let’s turn to private money creation by the Banking Sector.

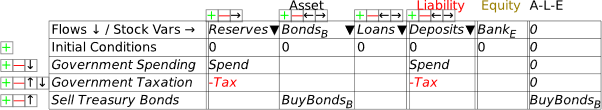

- Credit Money

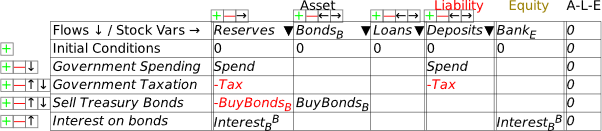

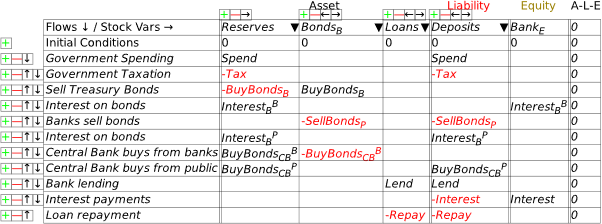

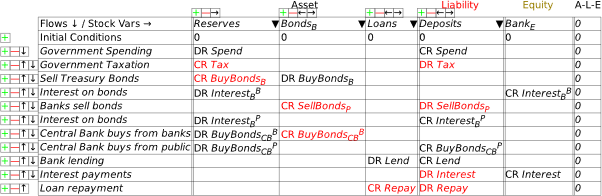

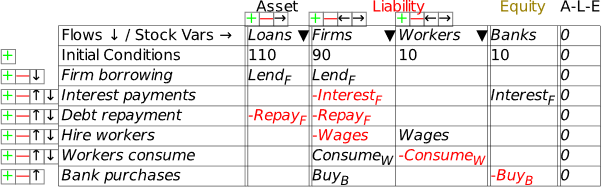

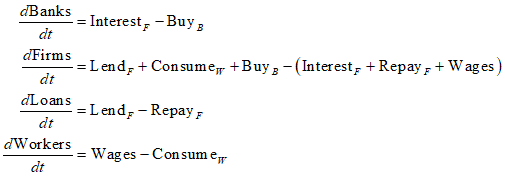

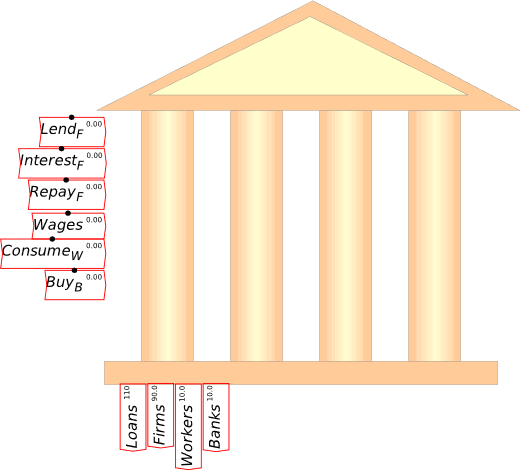

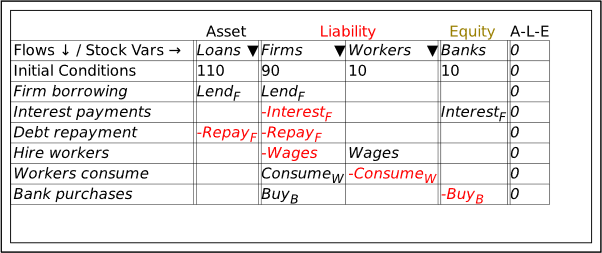

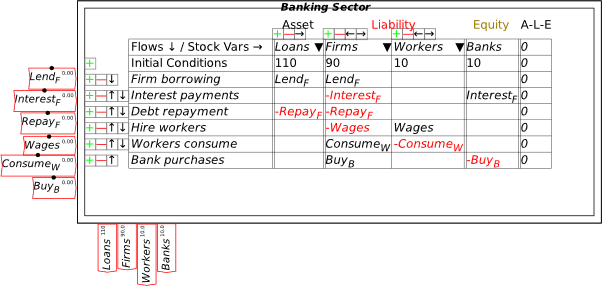

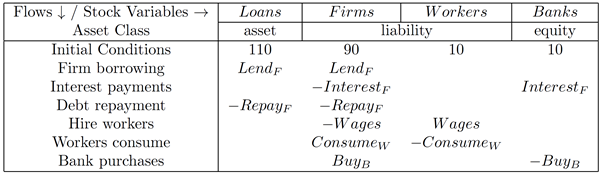

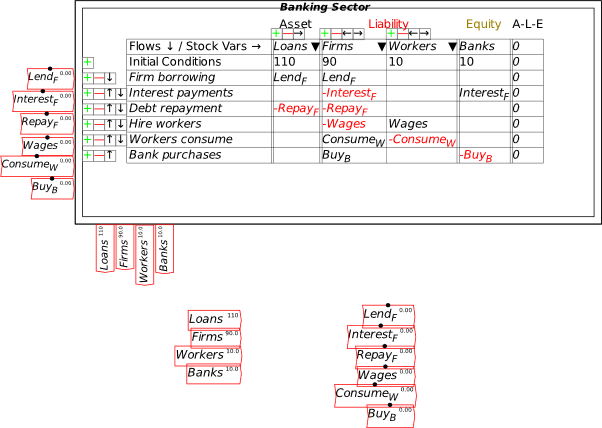

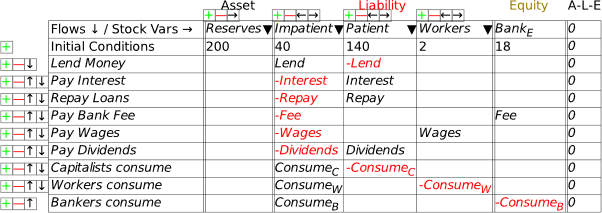

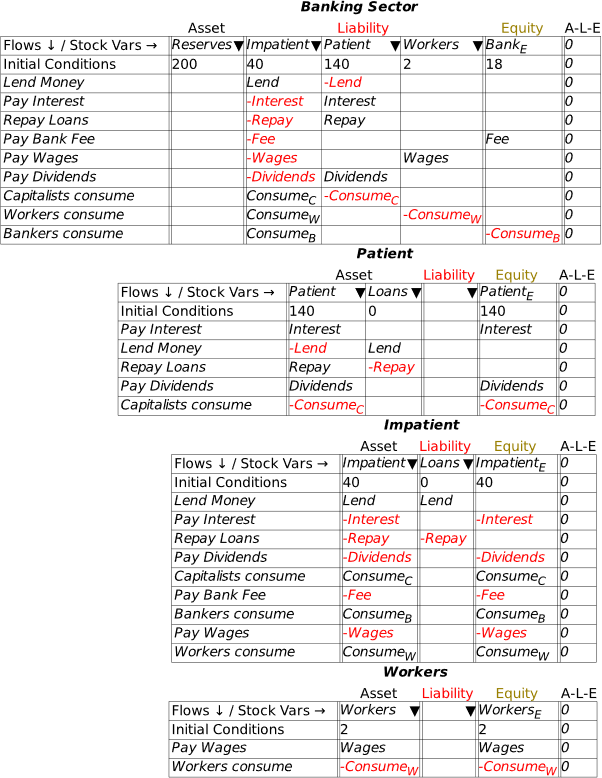

I’m now assuming that you have some fluency with Godley Tables—you have been following my explanation by reproducing these tables in Minsky yourself, haven’t you?—and so I’ll just cut to the chase, and enter the three necessary operations for private money creation in one go: new loans by banks, paying interest on loans, and loan repayment by bank customers. Use the words “Lend”, “Interest”, and “Repay” for these flows, and make the entries so that the checksum column always sums to zero (Figure 48).

Figure 48: The basic operations of fiat and credit money from the Banking Sector’s point of view

As an aside, if you have a background in accounting, you may prefer to see Minsky’s operations using DR and CR rather than plus and minus entries. You can engage that from the Options menu on the Table: choose “DR/CR Style”. Then the model in Figure 48 will look like Figure 49 (I prefer the plus/minus approach, so that’s what I’ll stick with from now on).

Figure 49: Figure 48 in DR/CR style

Following the general principle noted just above, that to create money, an operation must add to both the Asset and Liability/Equity sides of the banking sector’s balance sheet, it should be obvious that lending creates money while repayment destroys it. This simple fact is ignored by the mainstream model of lending, known as Loanable Funds, which treats banks as “financial intermediaries” that take in deposits from one set of customers (“Patient people”, to use Paul Krugman’s non-pejorative term) and then lend them out to other people (“Impatient people” in Krugman’s lexicon).
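The contrast between the two views can be sketched with a few lines of arithmetic. Both functions are hypothetical illustrations with made-up balances: the first follows the Lend and Repay entries described above, and the second is one simple way of reading the Loanable Funds description, in which existing deposits merely change hands.

```python
def bank_lending(deposits, loans, lend, repay):
    """Lend and Repay change Loans (Asset) and Deposits (Liability) together."""
    return deposits + lend - repay, loans + lend - repay


def loanable_funds(patient, impatient, lend):
    """Intermediation view: a loan only moves existing deposits between customers."""
    return patient - lend, impatient + lend


# Made-up starting balances and flows.
deposits_after, loans_after = bank_lending(deposits=1000, loans=500, lend=100, repay=40)
patient_after, impatient_after = loanable_funds(patient=600, impatient=400, lend=100)

print(deposits_after, loans_after)              # deposits rise by Lend minus Repay
print(patient_after + impatient_after)          # total deposits are unchanged
```

In the first case total deposits change by Lend minus Repay; in the second the total is the same before and after, which is why the intermediation view has no room for lending to create money.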

I’ll spend a lot of time on the macroeconomic impacts of private money creation in Chapter 8. For now, without writing a single equation, we’ve come up with a picture of the monetary aspects of a mixed fiat-credit money system that contradicts the conventional wisdoms promulgated by economics textbooks and mouthed by politicians.

If you’ve followed the argument here to date—especially if you’ve done so by reproducing the model in Minsky for yourself—then you’re well on the way to understanding the monetary dynamics of capitalism. I’ll repeat a lot of the points here in subsequent chapters, but with the addition of defining a mathematical model, rather than stopping at laying out the balance sheets, as I do here.

- A significant extension: Nonfinancial Assets

One weakness of Minsky was that it handled only assets which are also liabilities: something like a deposit account, for example, is an asset for the depositor, and a liability for the bank. But there are also assets—such as a house, gold, the market value of shares (a share is only a liability for the issuing company up to its issue price), cryptocurrencies, etc.—which are assets of the holder, but a liability of no-one. These go by the general moniker of “non-financial assets”.

This term is a bit misleading, since, in most people’s eyes, things like houses and precious metals are very much financial assets. However, they are not “at call”: your house might be “worth” $2 million in the current market, but to realize that valuation you’re going to have to sell it, which could take 6-18 weeks even in a buoyant housing market.

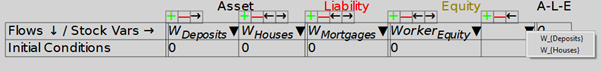

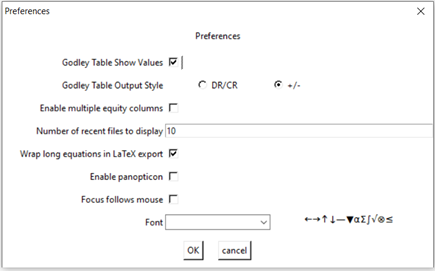

We have just added a means to handle such assets: you can define an Asset for a particular Godley Table as an Equity for that same Godley Table, once you have enabled multiple Equity columns (this is an option on the Options/Preferences main menu, and on the menu for Godley Tables). Once you have recorded some assets for a given entity on its Godley Table, the wedge dropdown on the Equity column will show assets on that same Godley Table that have not yet been allocated to another Table’s Liabilities.

Research alert: since we’ve just added this feature, and we are still fine-tuning it, I haven’t personally explored its implications yet. I believe, but I don’t yet know, that it will enable the modelling of (a) the “ab initio” creation of a monetary system, complete with the initial formation of banks, and (b) asset price booms and busts, and how they are generated and fuelled by the banking sector. Since these are very important topics that have been discussed but, to my knowledge, have not been modelled, these could both be excellent topics for a PhD.

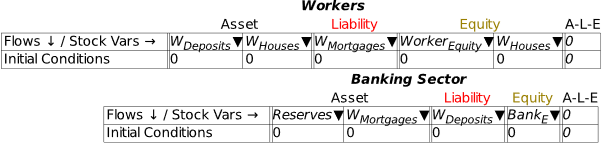

Figure 50 shows using this feature: the Asset WHouses is obviously an Asset that has no balancing Liability, while WDeposits is obviously also a Liability of the banking sector.

Figure 50: Dropdown Wedge on Equity column now shows unallocated Assets on the same Godley Table

Notice that WDeposits turns up twice on the Workers Godley Table in Figure 51.

Figure 51: Non-financial assets in a simple model

This feature should support modelling everything from the ab initio creation of banks in a fiat-credit money system (since banks were often established on the basis of ownership of land by their founders) to asset bubbles and their denouement in crashes—though to model all this will require a lot of additional work. But the basic structure needed to do this now exists in Minsky. I’ll sketch the basics of both topics and leave taking this further as an exercise for the reader.

- Ab initio creation of banks

Modern Monetary Theory describes the functioning of an existing monetary system—consisting of a government with a Treasury, a Central Bank, and its own currency, a private banking system, and the non-bank public with deposit accounts at the private banks. One common and correct defense of the MMT proposition that a government spends first and taxes later is that, when a currency is first created, it must be spent into circulation before it can be taxed back. This pre-supposes the existence of banks at the time, but how can they come into being with the key prerequisite of having Assets that exceed their Liabilities?

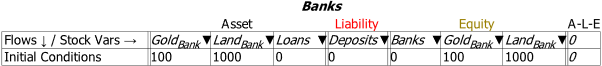

Nonfinancial assets provide the answer: banks are formed by wealthy people pledging various assets of theirs to the bank, so that it starts with positive nonfinancial assets. You can imagine something like the situation shown in Figure 52: a bank’s founders form a company and pledge various assets to the bank, so that it starts with an amount of nonfinancial assets—showing gold and land here—that give it net positive equity.

Of course, this involves someone valuing these assets (which are denominated in weight and area respectively) in terms of the new currency. That “someone” will be the ruler or political system establishing the currency—King Offa in the example I give in Manifesto—so there is, as usual, a foundational role for the government in establishing a financial system in a fiat money world. This “ab initio” situation is shown in Figure 52.

Figure 52: “Ab initio” formation of banks

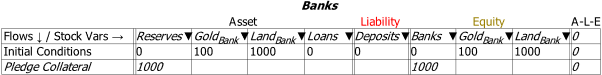

Next, the bank would pledge these assets as collateral to back the bank. These would be valued at a discount—in Figure 53 I show this as a roughly 10% discount, but it would surely be larger in practice. As noted earlier, Minsky doesn’t support using constants like 1,000 as flow entries in Godley Tables. I’ve cheated here by typing 1000 inside parentheses, which is a LaTeX way of typing a string of characters: .

Figure 53: Nonfinancial assets pledged at a discount in return for State-issued currency

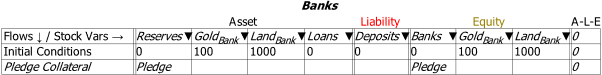

Swapping back to the Minsky convention of using variable names for flows, I’ll call this State-valuation of the nonfinancial assets backing the bank “Pledge”:

Figure 54: The same as Figure 53, but with the variable “Pledge” replacing the constant value 1000 in Figure 53

This is a feasible way to show how propertied interests can turn control over physical resources into the basis of a private monetary system. It would be relevant to the actual historical practice of “Free Banking” in the 19th century (Rockoff, 1974; Economopoulos, 1988; Flanders, 1996; Hickson, 2002; Lakomaa, 2007; Bodenhorn, 2008), and also the logical “ab initio” proof of MMT’s assertion that government spending precedes taxation. Part of that process requires banks that can purchase government bonds (if we try to build a model that is congruent with current practices, where bonds are issued to avoid a Treasury overdraft at the Central Bank); this additional feature of Minsky helps show how that could happen. The State valuation of the nonfinancial assets pledged as collateral to establish a private bank gives the private bank both positive equity in financial assets, and the excess Reserves that will be needed to buy the bonds issued to cover the initial government deficit.

There is much more work required to complete this model, but I’ll leave it at this level and invite research students to consider taking the concept further.

- Financial Assets and Bubbles in Nonfinancial Asset valuation

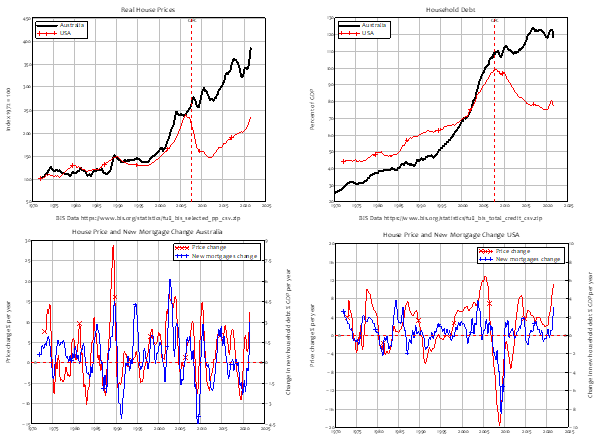

A more contemporary problem is the role of financial assets—fundamentally, bank debt—in determining the value placed on nonfinancial assets—primarily, housing. The empirical link is obvious—and as usual, is ignored by Neoclassical economists. The causal relation is easily inferred from the facts that (a) the supply of housing is very inflexible, so the volatility in the housing market comes from the demand side, rather than the supply side; (b) the demand side is dominated by mortgage credit—new mortgage debt—since houses are overwhelmingly purchased with borrowed money; (c) if you divide new mortgage debt by the average house price, you get a measure of how many “average” houses can be purchased; (d) given the inflexible supply, there is a strong relationship between mortgage credit (new mortgage debt) and the house price index; (e) there is therefore a relationship between change in mortgage credit and change in house prices.
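The quantities named in points (b) to (e) are simple to compute once you have a debt series. The sketch below shows only the arithmetic: every number in it is an invented placeholder with no empirical weight, and the function names are hypothetical.

```python
def first_difference(series):
    """Change from one observation to the next."""
    return [later - earlier for earlier, later in zip(series, series[1:])]


def houses_purchasable(new_mortgage_debt, average_house_price):
    """Point (c): how many 'average' houses the new mortgage debt can buy."""
    return new_mortgage_debt / average_house_price


# Invented placeholder series (not real data).
mortgage_debt = [1000.0, 1100.0, 1250.0, 1430.0, 1550.0]
house_price_index = [100.0, 104.0, 112.0, 117.0, 111.0]

# Point (b): mortgage credit is new mortgage debt, the change in the debt level.
mortgage_credit = first_difference(mortgage_debt)

# Point (e): the two changes that the text says are related.
change_in_credit = first_difference(mortgage_credit)
change_in_prices = first_difference(house_price_index)

print(mortgage_credit)
print(change_in_credit)
print(change_in_prices)
print(houses_purchasable(90_000_000, 450_000))
```

Note that mortgage credit is one difference of the debt level and the change in credit is a second difference, so the credit series is one observation shorter than the debt series and the change-in-credit series is two shorter.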

This relationship is apparent even in countries with very different house price and household debt histories, like the USA and Australia. The USA had a famous boom and bust in house prices, the “Subprime Crisis” (Silipo, 2011; Kaboub, 2010; Dymski, 2010; Wray, 2008; Bernanke, 2007) that led to the “Great Recession”. Australia, on the other hand, sailed through the “Global Financial Crisis”—as the “Great Recession” is known outside of America—with only a small dip in house prices, which are now 4 times as high, in real terms, as they were in the 1970s (in the USA, they are “only” 2.5 times as high). When you plot house prices in Australia and the USA against each other (the top left plot in Figure 55), you see two very different patterns: effectively always-rising prices in Australia; a boom, bust, and then rising prices once more in the USA.

Household debt also follows a very different pattern in both countries. Household debt in the USA rose strongly until 2008, and has fallen ever since—though with a slight blip at the beginning of the Covid-19 crisis. However, in Australia, household debt, like house prices, just keeps on rising (the top right plot in Figure 55).

However, when you plot the change in household credit against the change in house prices (the two bottom plots in Figure 55), you get a very similar pattern: rising house prices go with rising household credit, and falling house prices with falling household credit.

Figure 55: House prices, household debt & the “credit accelerator” in the USA & Australia
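The distinction between debt, credit (new debt), and the change in credit is easy to lose track of, so here is a minimal sketch of how the three series relate. The debt levels are invented for illustration and `first_difference` is a hypothetical helper, not part of Minsky.

```python
def first_difference(series):
    """Change between consecutive observations of a series."""
    return [later - earlier for earlier, later in zip(series, series[1:])]


# Hypothetical household debt levels at five successive dates.
debt = [100.0, 110.0, 125.0, 135.0, 138.0]

credit = first_difference(debt)              # new debt taken on in each period
change_in_credit = first_difference(credit)  # the "credit accelerator"

print(credit)            # [10.0, 15.0, 10.0, 3.0]
print(change_in_credit)  # [5.0, -5.0, -7.0]
```

Note that in this made-up series debt rises in every period, yet the change in credit turns negative after the second period. On the argument in the text, it is this last series that should be compared with the change in house prices.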

The link between rising household credit and rising house prices is therefore obvious: the question is, how to model it? In the Appendix, starting on page 255, I cover the mathematics of the relationship between rising household credit and rising house prices; here I show the stylized relationships between change in financial assets and change in the valuation of houses, using this new feature of Minsky.

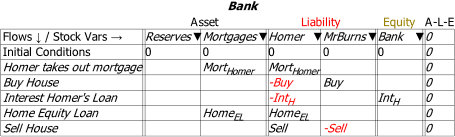

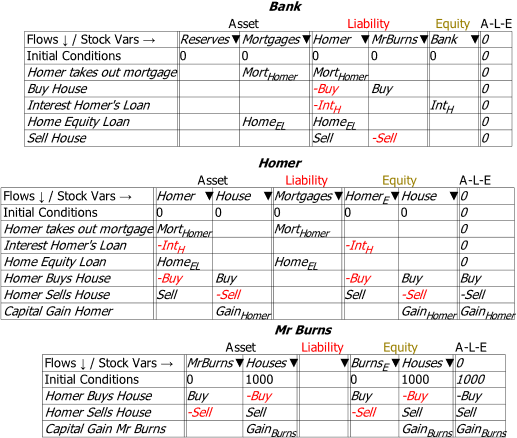

Imagine that Homer Simpson wanted to buy a house off the real estate magnate Mr Burns. Homer first has to take out a mortgage with the bank, pay the money needed to buy the house to Mr Burns, pay interest on the mortgage (and principal, but I’m omitting that to focus on the nonfinancial assets issue here), maybe take out a Home Equity loan in his later years, and then sell the home at the end of his life. These transactions are shown in Figure 56.

Figure 56: The Bank and financial-assets-only view of house purchase and sale

This view shows the changes in financial assets and liabilities, but omits changes to the distribution and valuation of the nonfinancial asset which is the subject of the transaction—the house. These are shown in Figure 57, in the final three rows of the Godley Tables for both Homer and Mr Burns.

The purchase of the house by Homer (the 3rd last row of his Godley Table) converts an amount of dollars’ worth of financial assets (Homer’s bank account) into an identical valuation of a nonfinancial asset (the house). If the purchase price was $450,000, Homer’s bank account declines by $450,000, and he then owns a house with an initial valuation of $450,000. The reverse applies to Mr Burns (the 3rd last row of his Godley Table). Similarly, the eventual sale of the house (from Homer back to Mr Burns in this simple example) does the reverse. However, this will be at a different price to the original purchase, so there will be a change in the value of the house when it is sold that will turn up as a capital gain or loss. In this case, a gain for Mr Burns will be an identical loss for Homer. The question to be explored in a proper model is what causes the change in valuation, which will come down to the dynamics of mortgage debt (and demographics and other issues) explored in the Appendix.
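The purchase step can be sketched as a pair of matched entries. This is an illustrative toy, not Minsky’s internal representation: the `BalanceSheet` class and `buy_house` function are hypothetical names, Homer’s mortgage liability is deliberately left out, and only the $450,000 purchase described above is modelled (not the later resale).

```python
from dataclasses import dataclass


@dataclass
class BalanceSheet:
    bank_account: float  # financial asset
    houses: float        # nonfinancial asset, at its current valuation

    @property
    def total_assets(self):
        return self.bank_account + self.houses


def buy_house(buyer, seller, price):
    """Swap `price` dollars of bank deposits for a house valued at `price`."""
    buyer.bank_account -= price
    buyer.houses += price
    seller.bank_account += price
    seller.houses -= price


# Assumption: Homer's mortgage proceeds are already sitting in his account.
homer = BalanceSheet(bank_account=450_000, houses=0)
burns = BalanceSheet(bank_account=0, houses=450_000)

buy_house(homer, burns, 450_000)

print(homer)  # bank_account=0, houses=450000
print(burns)  # bank_account=450000, houses=0
```

Each party’s total assets are the same before and after the purchase; only the composition changes, from financial to nonfinancial for Homer and the reverse for Mr Burns.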

With respect to Minsky’s internal logic, the interaction of nonfinancial with financial assets means that the rule no longer applies. If house prices are rising, then Homer makes a capital gain, which captures the reason that people get caught up in asset bubbles in the first place: it’s a way to escape the trap of net financial assets summing to zero, by stepping into another trap of asset price bubbles and private debt.

Figure 57: The full transaction set, including changes in nonfinancial assets (house ownership & valuation)

As this example is set up, there is no aggregate capital gain: Homer’s gain, should he be so lucky, will be Burns’s loss. This illustrates that the source of collective capital gain over time (the sort of increase in the aggregate valuation of houses and shares, and cryptocurrencies, during asset price bubbles) must lie elsewhere. In the case of housing, it is in the rising aggregate level of mortgage debt, as both the correlations shown in Figure 55 and the logical argument made in the Appendix illustrate. There would, I expect, be an interesting PhD thesis in taking this financial-nonfinancial asset valuation issue further.

Many other monetary questions can be answered simply by posing them in Minsky’s unique Godley Table structure. However, to fully exploit Minsky’s capabilities, you need to understand how to use the program to build dynamic simulation models. That is the topic of the next and subsequent chapters.

Note: This feature is still under development, and there are some issues we’re still not sure of. For example, looking at the sale of the house to Homer by Mr Burns, Burns’s equity changes form from a house valued at $X to $X in the bank. So, in aggregate form, the sum is zero. But in terms of Mr Burns’s stock of houses to sell, the operation results in a fall of $X in the valuation of his stock of houses, and this is shown both in the specific Equity column Houses, and in the sum for that row.

The Equity columns show the correct dynamics: the rate of change of Burns’s financial equity from the transaction equals X dollars per year, and the rate of change of his nonfinancial equity is minus X dollars per year. As to what the A-L-E column should show? We’re still not sure.

This feature will develop, and the questions it raises will be resolved, as we release new betas. This is another reason to support Minsky’s development via its Patreon page https://www.patreon.com/hpcoder.

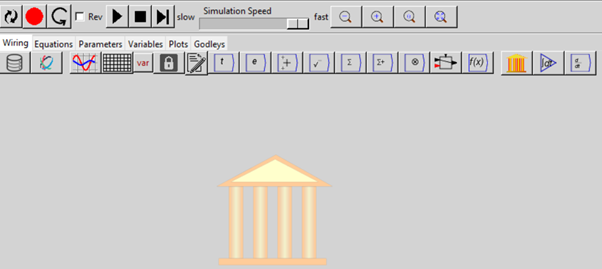

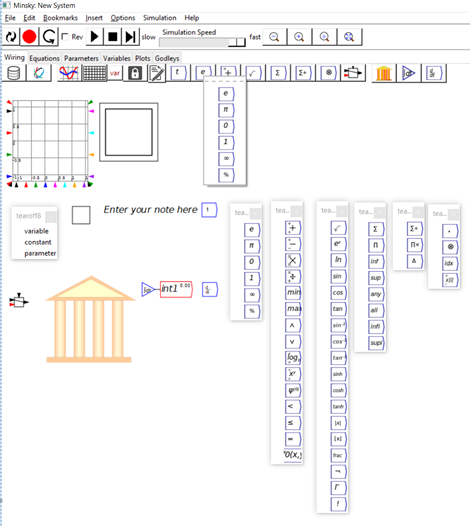

- The User Interface

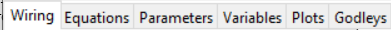

Figure 58 shows how Minsky appears when you first run the program.

Figure 58: Minsky’s interface

Minsky’s user interface has five main components:

- The menu bar, with options File/Edit/Bookmarks/Insert/Options/Simulation/Help;

- The simulation control toolbar with tools to reset a simulation, run it, stop it, step through it, change the speed of the simulation, reverse its direction (simulate backwards in time rather than forwards), zoom out/in/reset/full scale, record the construction of a model, and replay its construction;

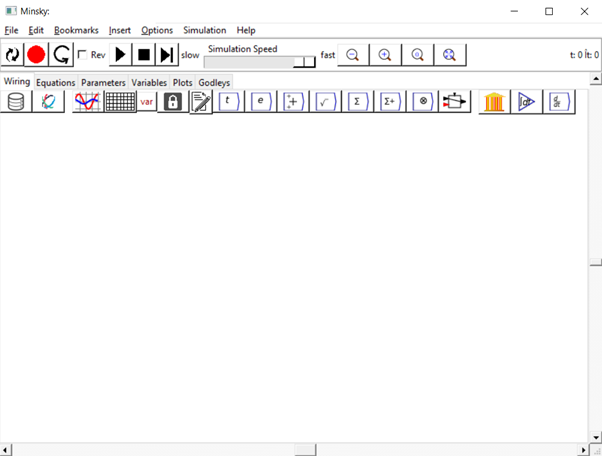

- Tabs for various aspects of the user interface. The main tab is Wiring, where you lay out your model using the visual design elements in Minsky; Equations shows the equations generated by your model; Parameters shows the names and values for model parameters; Variables lists the definition of the variables in a model; Plots shows selected graphs from a simulation on a separate canvas; and Godleys shows the double-entry bookkeeping tables used to build the financial aspects of any model you construct;

- The Toolbar for designing a model. From left to right, the tools: import data; attach data to a Ravel (a commercial extension to Minsky); insert a plot; insert a spreadsheet; from a drop-down menu, insert either a variable, a parameter, or a constant; lock an operation (so that the locked variable doesn’t change when the model is altered); insert a text note; and insert a time widget. The next six icons activate a series of drop-down menus to insert mathematical operators on the canvas. Finally, there is a logical switch operator, the Godley Table icon, integral block icon, and differential operator;

- And finally, the design canvas, where the contents depend on which Tab is active—see Figure 59. The main Wiring tab presents you with a design surface that is 100,000 by 100,000 pixels large—in terms of modern computer screens, that’s equivalent to an array of 4K monitors 25 monitors wide and 50 monitors deep—each with 4,000 pixels horizontally and 2,000 pixels vertically. You are unlikely to design a model that uses even 1% of that design space, but the room is there if needed to build truly gargantuan models.

Figure 59: Minsky’s interface, open on the “Wiring” Tab.

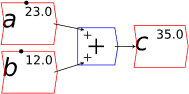

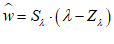

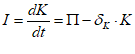

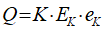

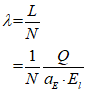

You will spend most of your time on the Wiring Tab when designing a Minsky model. As is standard in system dynamics programs, you create equations using wires that connect one or more entities to each other. Figure 60 shows what a simple equation looks like in Minsky:

Figure 60: A simple equation in Minsky

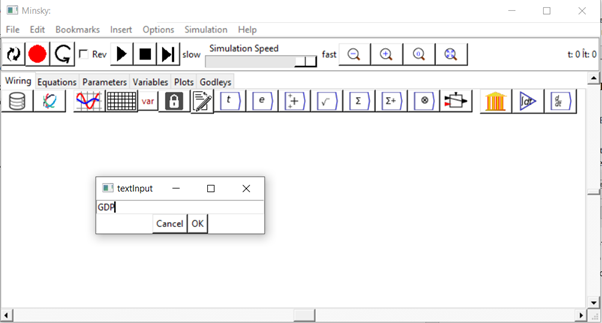

We have endeavoured to make entering equations as easy as possible, so you can just type anywhere on the canvas to add a variable to your model. For example, if you wish to define GDP, you can simply start typing “GDP” on the canvas. When you hit the “G” key, the “textInput” dialog box will pop up, where you can complete typing the expression: see Figure 61.

Figure 61

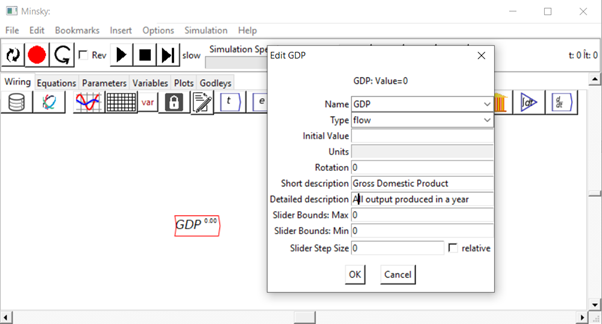

When you press the Enter key, or click on “OK”, the variable GDP will be entered on the canvas, and the Edit dialog box will pop up where, if you wish, you can give it an initial value, specify its units, give it a short description, etc.—see Figure 62.

Figure 62

You can also change its type, from “flow” to “parameter”, “constant”, “integral” or “stock” (we’ll meet the latter two types in the next chapter). Parameters differ from flow variables by (a) having a different colour (blue rather than red) and (b) having only an output, whereas flow variables have both an input and an output.

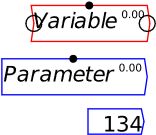

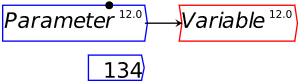

You can see the input and output ports if you put your mouse pointer above an object on the canvas. These are circles on the right and left ends of a Variable, and the right end only of Parameters and Constants—see Figure 63, where my mouse pointer was hovering over Variable, so that both its input and output ports are visible.

Figure 63: Variables, parameters, and constants

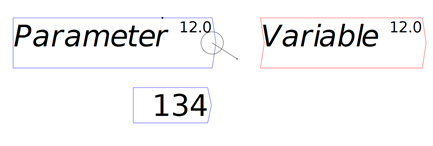

If you click anywhere apart from inside one of these circles, then you can drag the entity to somewhere else on the canvas. If you click inside one of the output circles—those on the right-hand side—then a “wire” will come out of it, which will attach to the nearest input port (you don’t have to click on an input port precisely)—see Figure 64, where I’ve started dragging a wire out of the output port from Parameter towards the input port for Variable.

Figure 64: Wire being drawn out of output port

When you release the mouse button, the wire “snaps” to the nearest input port, which is that for Variable (see Figure 65). From now on, Variable’s value will be whatever Parameter’s value is.

Figure 65: Parameter output wired to Variable input
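If it helps to think of wiring in programming terms, the following is a minimal sketch of what a wire does. It is an analogy only and says nothing about how Minsky is implemented internally: the class and method names are hypothetical, and the handling of an unwired variable (it reports its initial value) is an assumption of the sketch.

```python
class Parameter:
    """Has an output port only: it supplies a value but accepts no wire."""

    def __init__(self, name, value):
        self.name = name
        self.value = value

    def output(self):
        return self.value


class Variable:
    """Has an input port and an output port."""

    def __init__(self, name, initial_value=0.0):
        self.name = name
        self.initial_value = initial_value
        self.source = None  # whatever is wired to the input port

    def wire_from(self, source):
        self.source = source

    def output(self):
        # Assumption of this sketch: an unwired variable reports its initial value.
        if self.source is None:
            return self.initial_value
        return self.source.output()


parameter = Parameter("Parameter", 3.0)
variable = Variable("Variable")
variable.wire_from(parameter)  # the wire "snaps" onto Variable's input port

print(variable.output())  # 3.0
parameter.value = 5.0
print(variable.output())  # 5.0
```

The second `print` shows the point made in the text: once the wire is in place, the variable has no value of its own and simply follows the parameter.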

Of course, you’ll want to use mathematical operators to create more complicated definitions. In Minsky you can type the basic mathematical operators (addition, multiplication, division and subtraction) directly onto the canvas: you don’t have to use the drop-down menus on the icon bar.

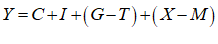

Let’s see what the equation for GDP looks like in Minsky, using the standard symbols economists use:

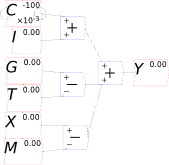

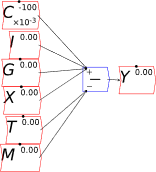

In Minsky, this looks like Figure 66:

Figure 66

You will notice one unusual thing about Figure 66: there are two inputs to the bottom input port of the “+” operator that defines Y. This is a common theme in Minsky, called “overloading”: if an operator can sensibly accept more than one input, then it does. The reason we do this is that system dynamics diagrams, which are effectively flowcharts that map across to equations, can get very messy, with lots of wires which can ultimately produce a “spaghetti diagram” effect. We aim to minimize clutter on the canvas, so you can replace the four addition and subtraction operators in Figure 66 with just one, as shown in Figure 67.

Figure 67
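The idea of overloading can be sketched in code. Several things here are assumptions rather than statements about Minsky itself: that the GDP equation in the figures is the usual expenditure identity Y = C + I + G + X − M, that each port simply sums every wire attached to it, and that the single operator in Figure 67 is a subtraction block with M on its bottom port. The function names and the numbers are hypothetical.

```python
def add_block(top_port, bottom_port=()):
    """Addition block: sums every wire attached to either input port."""
    return sum(top_port) + sum(bottom_port)


def subtract_block(top_port, bottom_port):
    """Subtraction block: sum of top-port wires minus sum of bottom-port wires."""
    return sum(top_port) - sum(bottom_port)


# Hypothetical values for consumption, investment, government spending,
# exports and imports.
C, I, G, X, M = 60.0, 20.0, 25.0, 15.0, 20.0

# One wire per port: three additions and one subtraction are needed.
chained = add_block(
    [add_block([add_block([C], [I])], [G])],
    [subtract_block([X], [M])],
)

# Overloaded ports: a single block does the same job.
overloaded = subtract_block([C, I, G, X], [M])

print(chained, overloaded)  # 100.0 100.0
```

Both layouts define the same Y; the overloaded version just needs one block and five wires instead of four blocks wired in a chain.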

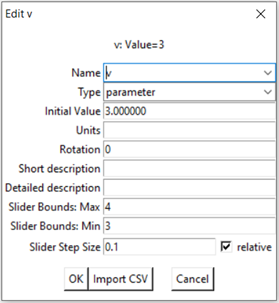

You may also have noticed the black dot on top of the Variable and Parameter blocks. This enables you to change the value of a parameter during a simulation. There are two ways to do this: by using the mouse to drag the dot to the left to reduce the value, and to the right to increase it; and by pressing the up arrow key to increase the value, or the down arrow key to reduce it, while the mouse cursor is hovering over the parameter. The maximum, minimum and step size are all set on the Edit dialog box (see Figure 68).

Figure 68: The edit dialog box for v, showing the slider Max, Min and Step Size
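As a rough mental model of how Max, Min and Step Size interact, here is a small sketch of a slider. It is not Minsky’s code: the `Slider` class is hypothetical, and clamping the value at the limits is an assumption about what happens when you step past Max or Min.

```python
from dataclasses import dataclass


@dataclass
class Slider:
    value: float
    minimum: float
    maximum: float
    step: float

    def _clamp(self, candidate):
        # Assumption: values outside [minimum, maximum] are pulled back to the limit.
        return max(self.minimum, min(self.maximum, candidate))

    def up(self):
        """Mimics one press of the up arrow key."""
        self.value = self._clamp(self.value + self.step)

    def down(self):
        """Mimics one press of the down arrow key."""
        self.value = self._clamp(self.value - self.step)


# Hypothetical settings for the capital-output ratio v.
v = Slider(value=4.0, minimum=2.0, maximum=4.0, step=0.5)
v.down()
v.down()
print(v.value)  # 3.0
```

A smaller step size gives finer control during a run, at the cost of more key presses to cover the same range.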

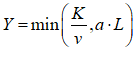

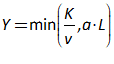

For example, you might use a “Leontief” production function, where output Y is defined as the minimum of the capital stock K divided by a capital-output ratio v, and an output-to-labour ratio times labour L:

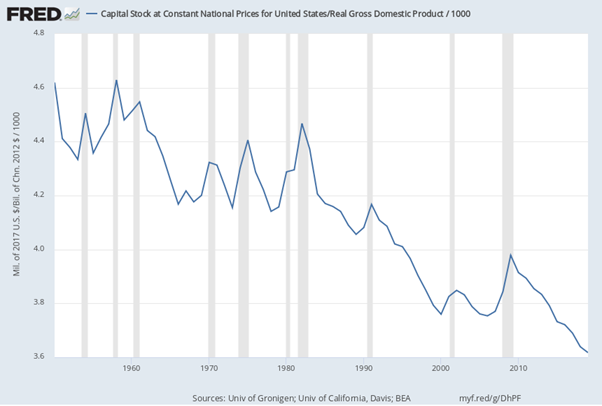

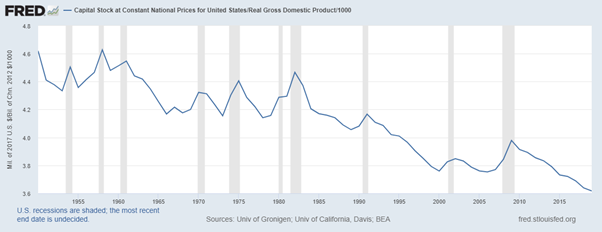

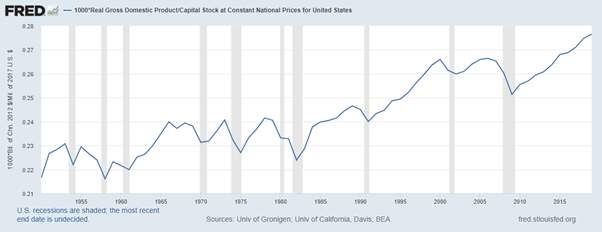
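In code, the Leontief function described above is a one-liner. In this sketch `v` is the capital-output ratio, while the symbol `a` for the output-to-labour ratio is my own placeholder and the numbers are hypothetical.

```python
def leontief_output(K, L, v, a):
    """Output is limited by whichever input is scarcer.

    K / v is the output the capital stock can support;
    a * L is the output the labour force can support.
    """
    return min(K / v, a * L)


# Hypothetical values: capital is the binding constraint here.
K, L, a = 400.0, 200.0, 1.0

print(leontief_output(K, L, v=4.0, a=a))  # 100.0
print(leontief_output(K, L, v=3.0, a=a))  # about 133.33
```

With capital as the binding constraint, lowering v from 4 to 3 raises output for the same capital stock, which is why it is useful to be able to vary this parameter during a simulation.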

Post-Keynesian models generally treat the capital-output ratio as a constant with a value of between 2 and 4. However, economic data implies that this is a variable with a decreasing trend over time (within a very small range), and that it rises during recessions—see Figure 69.

Figure 69: Capital stock at 2017 prices divided by GDP at 2012 prices (www.myf.red/g/DhPF)

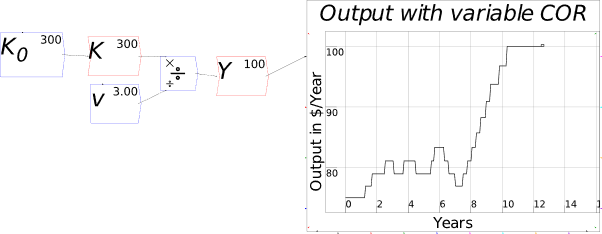

I’ll explain what the capital-output ratio (COR) actually is, and give an explanation for this trend, in the Energy chapter. For now, this implies that the practice of treating the ratio as a constant is generally defensible—the range is small, and the measurement of capital stock is compromised anyway (Sraffa 1960; Pasinetti 1969; Harcourt 1972)—but it would be wise to be able to vary the parameter and see what happens. Figure 70 shows the effect of varying the value of v from 4 to 3 during a simulation.

Figure 70: Output with varying capital-output ratio

- Text Formatting

Minsky supports text formatting, including subscripts, superscripts, and Greek letters, using the LaTeX mathematical formatting conventions. The basic formatting codes are:

- Underscore _, which subscripts the next character;

- Caret ^, which superscripts the next character;

- Brackets {}, which apply the underscore and caret to a string of characters; and

- Backslash \, which initiates a Greek character, using the English-language expression for the Greek letter. So typing \lambda into the text input dialog box and pressing Enter will generate the Greek letter λ.
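To make the four codes concrete, here is a small sketch that splits a label into plain, subscript and superscript parts. It only illustrates the conventions listed above: it is not how Minsky renders text, `parse_label` is a hypothetical name, and the Greek table covers just a handful of letters.

```python
import re

# A few Greek letters only; a real table would be much longer.
GREEK = {"alpha": "α", "beta": "β", "lambda": "λ", "pi": "π", "omega": "ω"}

# \name  |  _ or ^ followed by {group} or a single character  |  any other character
TOKEN = re.compile(r"\\([A-Za-z]+)|([_^])(\{[^{}]*\}|.)|(.)", re.DOTALL)


def parse_label(source):
    """Return a list of (kind, text) pairs, where kind is 'text', 'sub' or 'sup'."""
    parts = []
    for match in TOKEN.finditer(source):
        greek, mark, argument, plain = match.groups()
        if greek is not None:
            # Unknown names are passed through unchanged.
            parts.append(("text", GREEK.get(greek, "\\" + greek)))
        elif mark is not None:
            body = argument[1:-1] if len(argument) > 1 else argument
            parts.append(("sub" if mark == "_" else "sup", body))
        else:
            parts.append(("text", plain))

    # Merge neighbouring plain-text pieces for readability.
    merged = []
    for kind, text in parts:
        if merged and kind == "text" and merged[-1][0] == "text":
            merged[-1] = ("text", merged[-1][1] + text)
        else:
            merged.append((kind, text))
    return merged


print(parse_label("GDP_{Real}"))    # [('text', 'GDP'), ('sub', 'Real')]
print(parse_label(r"\lambda_t"))    # [('text', 'λ'), ('sub', 't')]
print(parse_label("x^2"))           # [('text', 'x'), ('sup', '2')]
```

The first example shows why the brackets matter: without them, only the “R” of “Real” would be subscripted.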

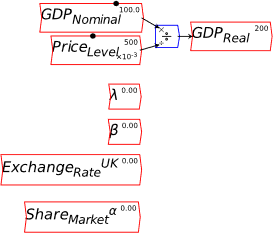

For example, if you wish to distinguish Real GDP from Nominal GDP, you can create variables by typing GDP_{Real} and GDP_{Nominal}, which display with “Real” and “Nominal” as subscripts. This improves the readability of the model, compared to standard text-only systems, which to my knowledge are all that are provided by the other system dynamics programs. Figure 71 shows some examples of LaTeX formatting in Minsky.

Figure 71: Some examples of LaTeX formatting in Minsky

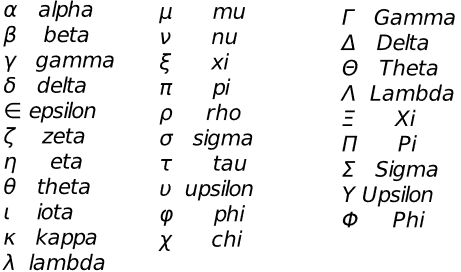

Figure 72 shows the most commonly used Greek characters supported by Minsky, and the English word that LaTeX displays as a Greek letter if you precede it by a backslash key (\).

Figure 72: A partial list of Greek characters supported by Minsky & the English word used for it

- Multiple copies of variables and parameters

Once you’ve defined a variable or parameter, you can copy it and use it anywhere else on a diagram. So, for example, if you use the Greek letter lambda to indicate the employment rate, then you can make a copy of λ and use it elsewhere in your model as an input to a wage determination model, a so-called “Phillips Curve”.

- A Keen Rant: Rehabilitating Bill Phillips

Before I illustrate building a Phillips Curve in Minsky, it’s important to rehabilitate the reputation of the man behind the name of the curve, the New Zealand engineer-turned-economist Bill Phillips.

Few people have been as badly misrepresented by Neoclassical economists as Bill Phillips: a courageous and innovative man has been reduced to a caricature of the empirical study he undertook over one weekend, to validate a hypothesis he made about a nonlinear relationship between the intensity of economic activity and the rate of change of input prices (Phillips 1958). Frankly, the Neoclassical caricature of Phillips is probably worse than their caricature of Keynes (Hicks 1937).

At least with Keynes, Neoclassicals couldn’t completely ignore his outstanding contributions to the politics and economics of his time. As a leading civil servant, Keynes attended the negotiations over the Treaty of Versailles, witnessed its distortion by France into a means to destroy its long-standing enemy Germany, and raised the alarm that the Treaty’s onerous terms would almost certainly lead to another war in The Economic Consequences of the Peace (Keynes 1920). He was a scion of English society, and while Hicks’s IS-LM model eviscerated Keynes’s General Theory (Keynes 1936), it didn’t eviscerate the man himself.

Phillips, on the other hand, had an unremarkable birth as the son of a New Zealand farmer, trained as an engineer, and spent most of WWII in a Japanese prisoner-of-war camp. But in that camp, among many other outstanding deeds, he risked his life to fashion a radio out of parts he stole from the commandant’s office, so that his fellow prisoners could hear British and American news reports on the progress of the War, rather than merely being force-fed Japanese propaganda (Leeson 1994, pp. 606-608).

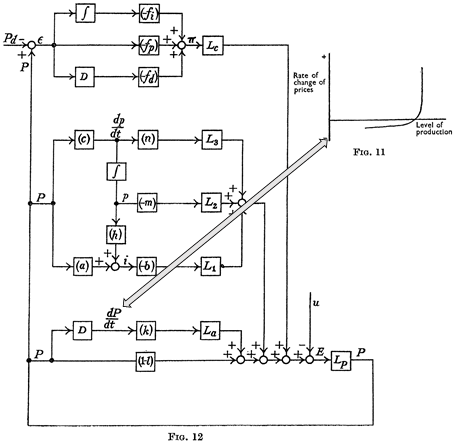

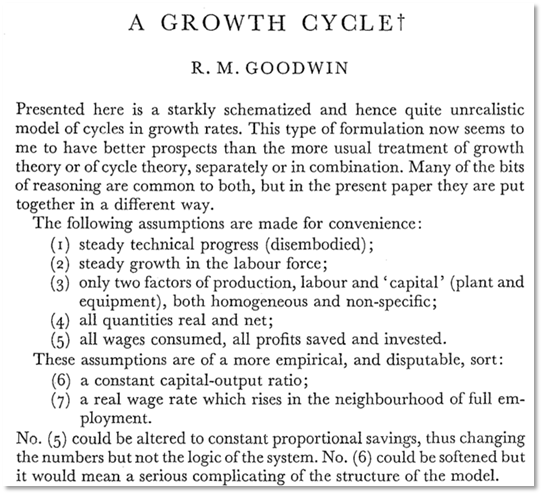

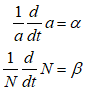

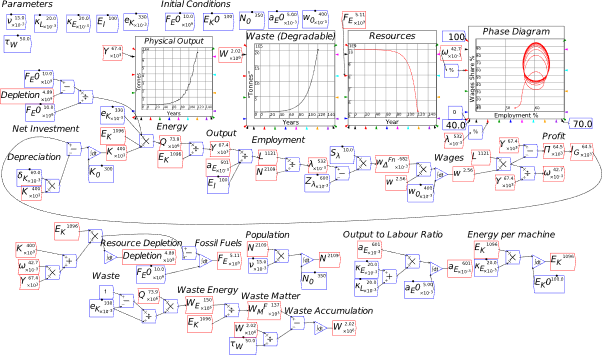

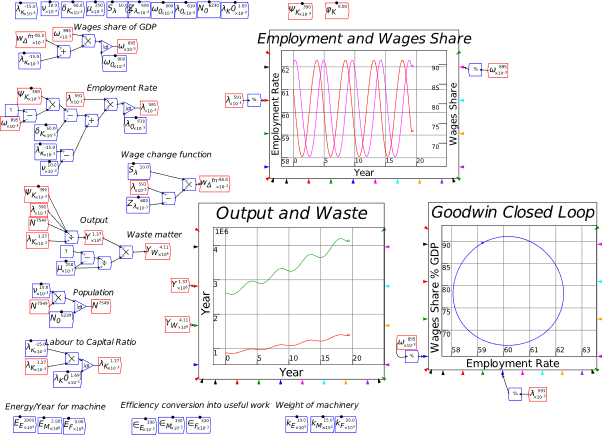

On his release, Phillips decided to use his engineering training to bring economics out of its Dark Ages of equilibrium thinking—using precisely the same modelling techniques that are now used in system dynamics programs like Minsky. The paper from which the model in Figure 73 is taken, “Stabilisation Policy in a Closed Economy” (Phillips 1954), pre-dates Forrester’s initial proposal of system dynamics by 2 years (Forrester 2003 [1956]), and the practical development of system dynamics software by about six years. Phillips was well ahead of his time, and, of course, his innovative work was ignored by mainstream economists.

Phillips’s hypothesized relationship between the level of economic activity and the rate of change of money wages (not prices!) was supposed to fit into the dynamic model shown in Figure 73, where there would not be a simple “trade-off” between inflation and unemployment, as his statistical work was bowdlerized down to, but a dynamic feedback process that would be difficult, though not necessarily impossible, to stabilize.

Figure 73: Phillips’s engineering diagram layout of an economic model with his hypothesized Phillips curve relationship inset (Phillips 1954, p. 309)

- Plots in Minsky

To illustrate the Phillips Curve in Minsky, we need to plot the input (for which I’ll use the employment rate, rather than the unemployment rate) and the output (the rate of change of wages). That requires adding a plot widget to the canvas, and there are two ways to do this: click on the plot icon on the toolbar; or press the @ key while on the canvas. We borrow a trick from Mathcad here: the @ symbol “looks like” a plot (use your imagination! It’s not as obvious as using * for “multiply”, but it will do), so we use that as a keyboard shortcut.

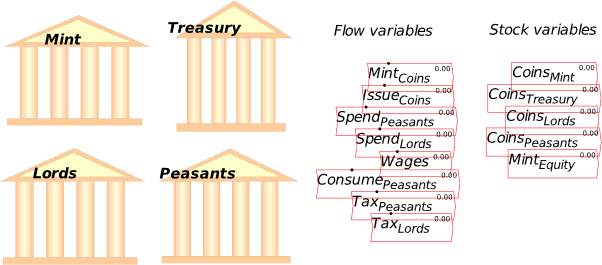

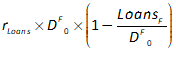

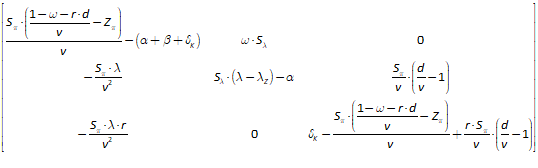
